Federal Appeal Challenges First Amendment Protection for AI-Generated CSAM

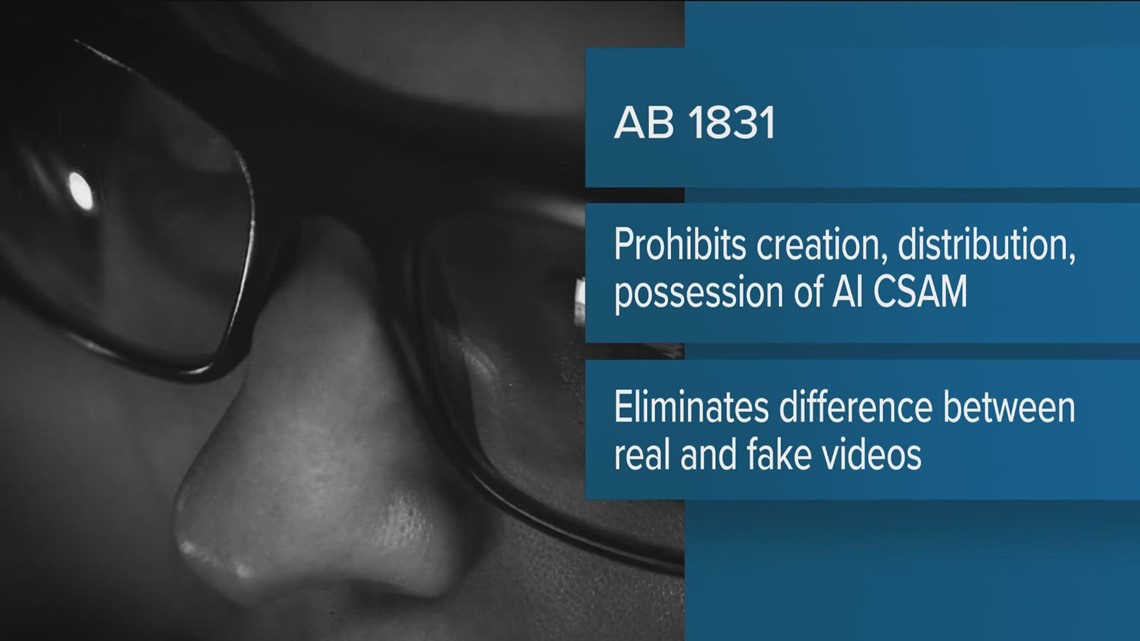

The legal status of AI-generated child sexual abuse material (CSAM) is under scrutiny, particularly with respect to constitutional protections. Federal prosecutors are appealing a judge's decision that private possession of AI-generated CSAM is protected under the First Amendment of the U.S. Constitution. The case involves Steven Anderegg, who was charged with creating over 13,000 AI-generated CSAM images and sharing some of them with a minor. The judge ruled that possessing such material in one's own home is protected, citing the 1969 Supreme Court ruling in Stanley v. Georgia, which established that the state cannot criminalize private possession of obscene material.

Prosecutors, however, argue that AI-generated CSAM falls under the PROTECT Act of 2003, which criminalizes "obscene visual representations of the sexual abuse of children." The outcome of the appeal could have profound implications for how AI-generated CSAM is treated legally, especially as the misuse of AI technology to create such content becomes more prevalent. The case will help determine whether existing laws can effectively address the challenges posed by AI-generated obscene material.