Safety Risks in Retrieval-Augmented Generation (RAG) Systems for LLMs

RAG LLM Safety Risks

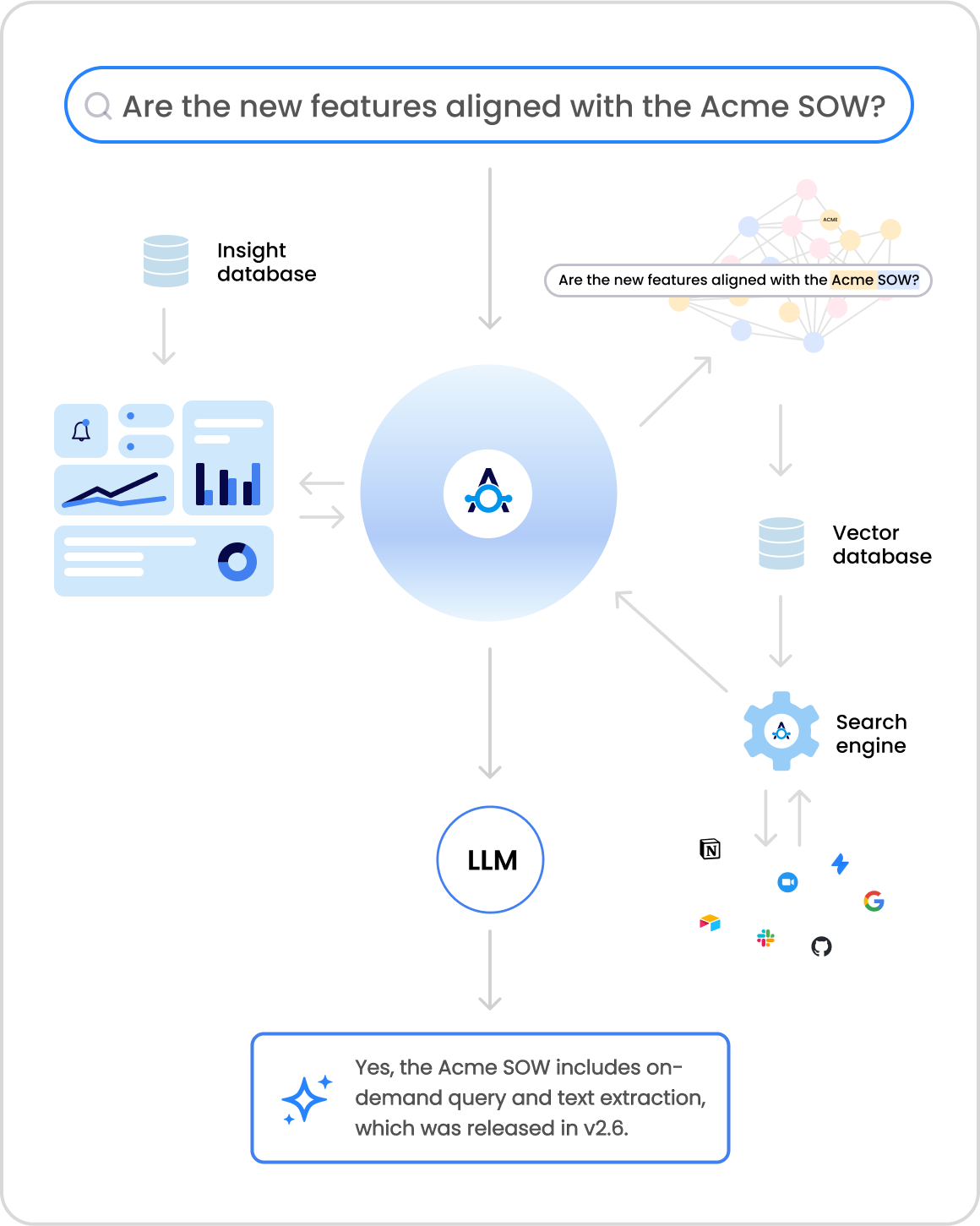

Retrieval-Augmented Generation (RAG) systems enhance the capabilities of Large Language Models (LLMs) by dynamically retrieving and integrating external data. However, this integration introduces significant safety risks that are often overlooked. Below, we outline the key vulnerabilities and their implications:

1. Data Poisoning

One of the most critical risks in RAG systems is data poisoning, where attackers inject malicious or misleading information into the external knowledge bases that the model relies on. This can lead to the generation of harmful or incorrect outputs, even if the model itself is well-aligned and safe. For example, a compromised medical database could cause a healthcare LLM to provide dangerous treatment recommendations.

2. Information Leakage

RAG systems aggregate data from diverse sources, which can inadvertently expose sensitive or proprietary information. This information leakage occurs when the retrieval mechanism fails to distinguish between trusted internal repositories and unvetted external sources, leading to the unintended disclosure of confidential data.

3. Prompt Injection

Prompt injection attacks exploit the implicit trust that LLMs place in their input. By embedding malicious instructions within seemingly benign queries, attackers can bypass safety protocols and manipulate the model’s outputs. This technique is particularly effective in RAG systems, where the model’s reliance on external data can be exploited to introduce harmful content.

4. Retrieval-Based Exploits

Unlike traditional jailbreaks that rely on prompt manipulation, retrieval-based exploits target the model’s retrieval pipeline. Attackers can tamper with the external data sources, embedding malicious content that the model retrieves and incorporates into its responses. This method is harder to detect because it operates at the systemic level, bypassing traditional input-output filters.

5. Incremental Poisoning

Incremental poisoning involves introducing small, seemingly innocuous changes to the external data over time. This gradual approach avoids triggering anomaly detection systems, allowing malicious entries to accumulate unnoticed. The model increasingly relies on corrupted data, amplifying the attack’s impact.

6. Contextual Masking

Attackers can embed malicious content within highly relevant or authoritative documents, making it difficult to detect. For instance, a compromised technical manual might include subtle errors that mislead users without raising immediate suspicion. This contextual masking makes it challenging to identify and mitigate the risks.

7. Real-Time Validation Challenges

RAG systems continuously retrieve and integrate new information, making real-time validation of data integrity a significant challenge. Traditional validation methods are often insufficient to detect evolving threats, necessitating the development of more robust, dynamic validation systems.

8. Red-Teaming Limitations

Existing red-teaming methods, which are effective in non-RAG settings, are less effective in RAG systems. Adversarial prompts that can jailbreak a standard LLM often fail in RAG settings because the retrieval process introduces additional variables. This highlights the need for red-teaming methods specifically tailored for RAG LLMs.

Conclusion

While RAG systems offer enhanced capabilities for LLMs, they also introduce complex safety risks that require a multi-layered defense strategy. Organizations must implement robust validation mechanisms, real-time anomaly detection, and strict data provenance protocols to mitigate these risks. Additionally, the development of specialized red-teaming methods and zero-trust architectures is essential to ensure the security and reliability of RAG-powered LLMs.