DeepSeek-R1

by Hangzhou DeepSeek Corporation

DeepSeek-R1 is a high-performance AI reasoning model designed to excel in tasks like math, coding, and natural language reasoning with minimal labeled data.

DeepSeek-R1: High-Performance AI Reasoning Model

What is DeepSeek-R1?

DeepSeek-R1 is a high-performance AI reasoning model developed by Hangzhou DeepSeek Corporation. It is designed to perform on par with the official release of OpenAI's o1, excelling in tasks such as math, coding, and natural language reasoning. The model relies on large-scale reinforcement learning during post-training, which lets it reach strong performance with minimal labeled data. DeepSeek-R1 is open-sourced under the MIT License, which permits using its outputs to train other models through distillation.

Key Features of DeepSeek-R1

- High-Performance Reasoning: Excels in tasks such as math, coding, and natural language reasoning, with performance comparable to the official release of OpenAI's o1.

- Reinforcement Learning with Minimal Data: Trained using reinforcement learning techniques with minimal labeled data, significantly enhancing reasoning capabilities.

- Model Distillation Support: Allows users to distill the model's outputs to train smaller models tailored for specific applications.

- Open Source with Flexible Licensing: Released under the MIT License, enabling free use, modification, and commercialization.
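The page does not say how distillation is carried out. The linked technical paper reports that its smaller distilled models were produced by supervised fine-tuning of open models on samples generated by DeepSeek-R1. The sketch below shows one possible data-preparation step under that approach, assuming you already have teacher outputs split into a reasoning trace and a final answer. It keeps only samples whose answer matches a known reference (a simplified form of rejection sampling) and serializes prompt/completion pairs as JSONL. `TeacherSample`, `to_sft_records`, and `to_jsonl` are hypothetical names, and the `<think>` template for the student's targets is an assumption.

```python
import json
from dataclasses import dataclass


@dataclass
class TeacherSample:
    """One response collected from the teacher model (hypothetical structure)."""
    prompt: str
    reasoning: str   # chain of thought returned by the teacher
    answer: str      # teacher's final answer
    reference: str   # known-correct answer, used only for filtering


def to_sft_records(samples: list[TeacherSample]) -> list[dict]:
    """Drop samples with wrong answers and format the rest as SFT targets.

    Exact string match against the reference is a simplification; real
    pipelines typically use task-specific answer checkers.
    """
    records = []
    for s in samples:
        if s.answer.strip() != s.reference.strip():
            continue  # reject incorrect teacher outputs
        # Assumed target format: reasoning wrapped in <think> tags, then the answer.
        target = f"<think>\n{s.reasoning.strip()}\n</think>\n\n{s.answer.strip()}"
        records.append({"prompt": s.prompt, "completion": target})
    return records


def to_jsonl(records: list[dict]) -> str:
    """Serialize records as one JSON object per line."""
    return "\n".join(json.dumps(r, ensure_ascii=False) for r in records)
```

The resulting JSONL can be fed to whatever fine-tuning tool trains the smaller student model; the field names `prompt` and `completion` are placeholders to adapt to that tool.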

Technical Principles of DeepSeek-R1

- Reinforcement Learning-Driven Reasoning: DeepSeek-R1 extensively applies reinforcement learning during post-training, significantly improving reasoning capabilities with minimal labeled data.

- Chain-of-Thought (CoT) Reasoning: Uses long chain-of-thought reasoning to decompose complex problems into multi-step logical processes, improving its effectiveness on complex tasks.

- Model Distillation: Supports model distillation, allowing developers to transfer DeepSeek-R1's powerful reasoning capabilities into lighter models for various applications.
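The page does not name the reinforcement-learning algorithm. The linked technical paper describes Group Relative Policy Optimization (GRPO) with rule-based rewards: one for a correct final answer and one for placing the reasoning between `<think>` and `</think>` tags, which is what encourages explicit chain-of-thought output. The sketch below illustrates only the reward and advantage step of that idea for one group of sampled completions; the exact-match answer check and the function names are simplifications of my own, and the policy-gradient update itself is omitted.

```python
import re
import statistics

# A completion must start with a <think>...</think> block; the rest is the answer.
THINK_PATTERN = re.compile(r"^<think>.*?</think>(.*)$", re.DOTALL)


def format_reward(completion: str) -> float:
    """1.0 if the reasoning is wrapped in <think> tags, else 0.0."""
    return 1.0 if THINK_PATTERN.match(completion.strip()) else 0.0


def accuracy_reward(completion: str, reference: str) -> float:
    """1.0 if the text after </think> equals the reference (simplified check)."""
    match = THINK_PATTERN.match(completion.strip())
    if match is None:
        return 0.0
    return 1.0 if match.group(1).strip() == reference.strip() else 0.0


def group_relative_advantages(rewards: list[float]) -> list[float]:
    """Normalize each reward by its group's mean and (population) std."""
    mean = statistics.fmean(rewards)
    std = statistics.pstdev(rewards)
    if std == 0:
        return [0.0] * len(rewards)  # identical rewards carry no learning signal
    return [(r - mean) / std for r in rewards]


# Several completions sampled for the same prompt, "What is 3 * 4?"
completions = [
    "<think>3 times 4 is 12.</think> 12",
    "<think>Maybe 13.</think> 13",
    "12",  # correct number, but no reasoning block
    "<think>3*4=12</think>12",
]
rewards = [format_reward(c) + accuracy_reward(c, "12") for c in completions]
advantages = group_relative_advantages(rewards)
```

Because each completion is scored relative to the other samples for the same prompt, this scheme needs no separately trained value network (critic), which is one of the main design points of GRPO.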

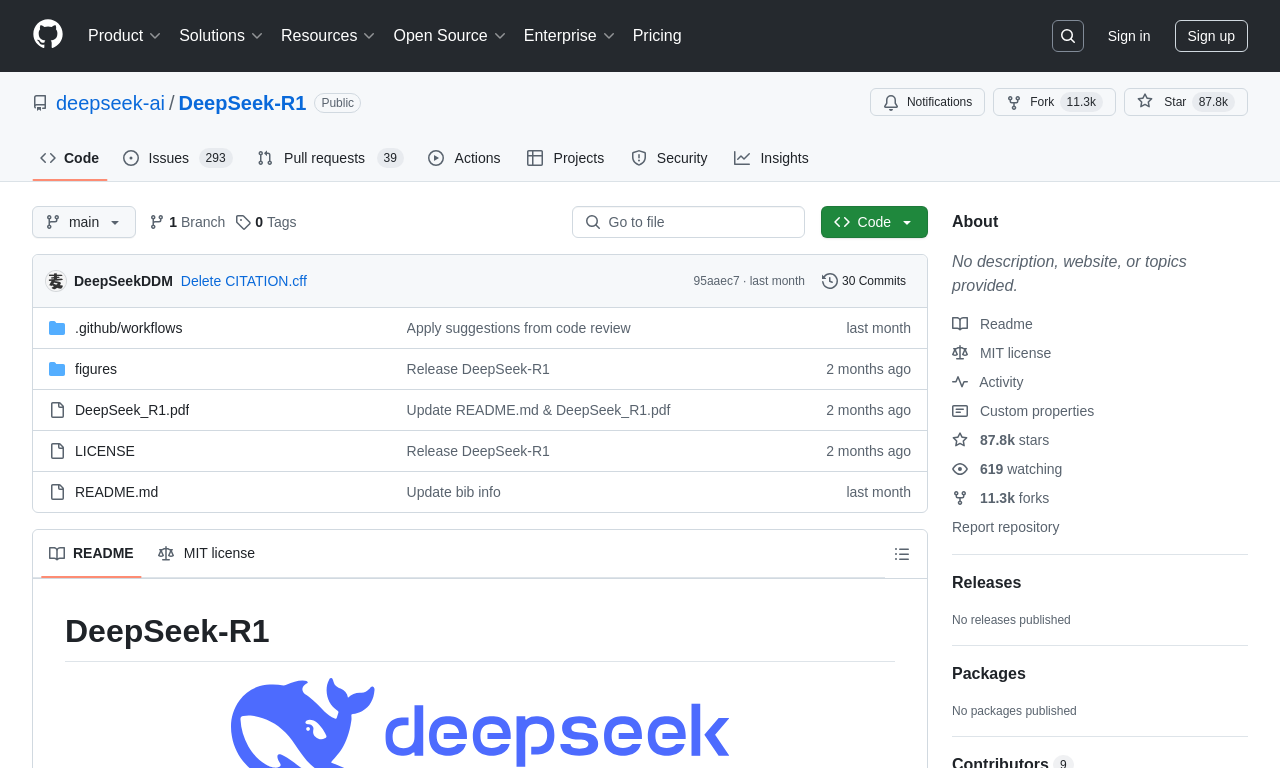

Project Links

- GitHub Repository: https://github.com/deepseek-ai/DeepSeek-R1

- HuggingFace Model Hub: https://huggingface.co/deepseek-ai/DeepSeek-R1

- Technical Paper: https://github.com/deepseek-ai/DeepSeek-R1/blob/main/DeepSeek_R1.pdf

How to Use DeepSeek-R1

- Official Website Experience: Visit the DeepSeek official website or app, enable the "Deep Thinking" mode, and directly use DeepSeek-R1 for various reasoning tasks.

- API Service: DeepSeek-R1 offers API services, accessible by setting model='deepseek-reasoner'.

- Pricing:

  - Input tokens: ¥1 per million (cache hit) / ¥4 per million (cache miss)

  - Output tokens: ¥16 per million
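A minimal sketch of calling the API with only the Python standard library, plus a cost estimate from the pricing listed above. The endpoint `https://api.deepseek.com/chat/completions` and the OpenAI-style request body are assumptions based on DeepSeek's public API documentation; verify them, and the current prices, before relying on this. `build_request` and `estimate_cost_cny` are hypothetical helper names, and the request is only built here, not sent.

```python
import json
import urllib.request

# Prices as listed on this page, in CNY per million tokens (they may have changed).
PRICE_INPUT_CACHE_HIT = 1.0
PRICE_INPUT_CACHE_MISS = 4.0
PRICE_OUTPUT = 16.0

# Assumed endpoint; check DeepSeek's official API documentation.
API_URL = "https://api.deepseek.com/chat/completions"


def build_request(prompt: str, api_key: str) -> urllib.request.Request:
    """Build (but do not send) a chat-completions request for DeepSeek-R1."""
    body = {
        "model": "deepseek-reasoner",  # selects DeepSeek-R1, per this page
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        API_URL,
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {api_key}",
        },
        method="POST",
    )


def estimate_cost_cny(cache_hit_tokens: int, cache_miss_tokens: int,
                      output_tokens: int) -> float:
    """Estimate the cost of one call in CNY from its token counts."""
    return (
        cache_hit_tokens * PRICE_INPUT_CACHE_HIT
        + cache_miss_tokens * PRICE_INPUT_CACHE_MISS
        + output_tokens * PRICE_OUTPUT
    ) / 1_000_000
```

For example, 200,000 cached input tokens, 300,000 uncached input tokens, and 100,000 output tokens come to ¥0.2 + ¥1.2 + ¥1.6 = ¥3.0. To send the request, pass it to `urllib.request.urlopen` and parse the JSON response. DeepSeek's documentation describes a `reasoning_content` field alongside `content` in the returned message, and `usage` fields such as `prompt_cache_hit_tokens` and `prompt_cache_miss_tokens` that map onto the cost arguments; output tokens for this model appear to include the generated chain of thought. Treat these field names as assumptions to confirm against the current docs.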

Applications of DeepSeek-R1

- Research & Development: DeepSeek-R1 excels in complex tasks like math reasoning, code generation, and natural language processing, making it ideal for scenarios requiring large-scale reasoning and logical processing.

- Natural Language Processing (NLP): Provides strong technical support for NLP tasks such as natural language understanding, automated reasoning, and semantic analysis.

- Enterprise Intelligence: Enterprises can integrate DeepSeek-R1 into their products for applications like intelligent customer service, automated decision-making, and personalized recommendations.

- Education & Training: Serves as an educational tool to help students master complex reasoning methods, enhancing understanding in subjects like math and programming.

- Data Analysis & Decision Support: Handles complex logical reasoning tasks, supporting data analysis, market forecasting, and strategic decision-making in businesses.

Model Capabilities

Model Type: Language

Supported Tasks:

- Math Reasoning

- Coding

- Natural Language Reasoning

- Model Distillation

Tags: AI Reasoning, Reinforcement Learning, Model Distillation, Natural Language Processing, Math Reasoning, Coding, Open Source, MIT License, Chain-of-Thought Reasoning, API Integration

Usage & Integration

- Pricing: Paid. Input tokens: ¥1 per million (cache hit) / ¥4 per million (cache miss); output tokens: ¥16 per million

- API Access: Available

- License: Open Source (MIT)

