F5-TTS

by Shanghai Jiao Tong University

What is F5-TTS?

F5-TTS is an open-source text-to-speech (TTS) system developed by Shanghai Jiao Tong University. It combines flow matching with a diffusion transformer (DiT) to generate natural, fluent, and accurate speech without additional supervision such as phoneme alignment or duration prediction. F5-TTS supports zero-shot voice cloning, multi-language synthesis (including Chinese and English), and long-text synthesis. It also offers emotion control and speed control, so users can adjust the emotional expression and speaking rate of the synthesized speech. The model was trained on a large-scale dataset of 100,000 hours of speech and shows strong performance and generalization. Typical use cases include audiobooks, voice assistants, language learning, news broadcasting, and game dubbing, for both commercial and non-commercial purposes.

Main Features of F5-TTS

- Zero-shot Voice Cloning: Mimic a voice from a reference audio sample, without speaker-specific training data.

- Speed Control: Adjust the speaking rate of the generated speech.

- Emotion Control: Control the emotional expression of the synthesized speech, making machine-generated speech more human-like.

- Long-text Synthesis: Support continuous speech synthesis for long texts, suitable for reading and broadcasting lengthy content.

- Multi-language Support: Process and generate speech in multiple languages, including Chinese and English.

- Large-scale Data Training: Trained on a dataset of 100,000 hours of speech, which supports the model's generalization ability and the naturalness of the synthesized speech.

Technical Principles of F5-TTS

- Flow Matching: F5-TTS is trained with a flow matching objective. The model learns to transform a simple probability distribution (e.g., a standard normal distribution) into a complex one that approximates the data distribution. Training covers the full range of flow steps and data, so the model learns the entire path from the initial distribution to the target distribution.

- Diffusion Transformer (DiT): As the backbone network, DiT processes sequence data, gradually removing noise during the generation process to produce clear speech signals.

- ConvNeXt V2: F5-TTS refines the text representation with ConvNeXt V2, making it easier to align with speech features and improving the quality and naturalness of the synthesized speech.

- Sway Sampling Strategy: A flow step sampling strategy applied during inference. Instead of spacing flow steps uniformly, it samples them non-uniformly to improve performance and efficiency. It places more emphasis on the early stages of generation, which helps the model capture the outline of the target speech more accurately.

- End-to-end System Design: F5-TTS goes directly from text input to speech output. It omits components common in traditional pipelines, such as phoneme alignment and duration prediction, which simplifies both training and inference.
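
The flow matching and sway sampling ideas above can be illustrated with a small, dependency-free sketch. This is not the F5-TTS implementation. It assumes the straight-line probability path commonly used with flow matching, and a sway function of the form u + s * (cos(pi/2 * u) - 1 + u) with s = -1 chosen as an illustrative coefficient. All function names are hypothetical, and the trained network is replaced by an oracle velocity so that the example runs on its own.

```python
import math


def sway_sample(u, s=-1.0):
    """Map a uniform flow step u in [0, 1] to a non-uniform step.

    Assumed form: u + s * (cos(pi/2 * u) - 1 + u).
    A negative s pushes steps toward t = 0 (the early stage of generation);
    s = 0 leaves the step unchanged.
    """
    return u + s * (math.cos(math.pi / 2 * u) - 1 + u)


def sway_schedule(n_steps, s=-1.0):
    """Return n_steps + 1 time points from 0 to 1, warped by sway_sample."""
    return [sway_sample(i / n_steps, s) for i in range(n_steps + 1)]


def flow_matching_pair(x0, x1, t):
    """Build one training example for a straight-line flow (an assumption).

    x0 is a noise sample, x1 is a data sample, t is the flow step.
    Returns the interpolated point x_t and the velocity the model should
    predict there, which is x1 - x0 for a straight path.
    """
    x_t = [(1 - t) * a + t * b for a, b in zip(x0, x1)]
    target_velocity = [b - a for a, b in zip(x0, x1)]
    return x_t, target_velocity


def euler_integrate(velocity_fn, x0, schedule):
    """Follow the velocity field from noise to data with Euler steps."""
    x = list(x0)
    for t_cur, t_next in zip(schedule, schedule[1:]):
        v = velocity_fn(x, t_cur)
        dt = t_next - t_cur
        x = [xi + dt * vi for xi, vi in zip(x, v)]
    return x


# Toy demonstration with 2-dimensional "speech features".
x0 = [0.0, 2.0]   # stands in for a noise sample
x1 = [1.0, 0.0]   # stands in for a data sample


def oracle_velocity(x, t):
    # Hypothetical stand-in for the trained model: the exact velocity.
    return [b - a for a, b in zip(x0, x1)]


schedule = sway_schedule(8)
x_end = euler_integrate(oracle_velocity, x0, schedule)
print("schedule:", [round(t, 3) for t in schedule])
print("end point:", [round(v, 6) for v in x_end])
```

With a negative coefficient, the schedule takes small steps near t = 0 and larger steps near t = 1, which matches the emphasis on the early stages of generation described above. In a real system the oracle would be replaced by the trained DiT, and the training loss would compare the model's prediction at x_t against the target velocity.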

Project Links for F5-TTS

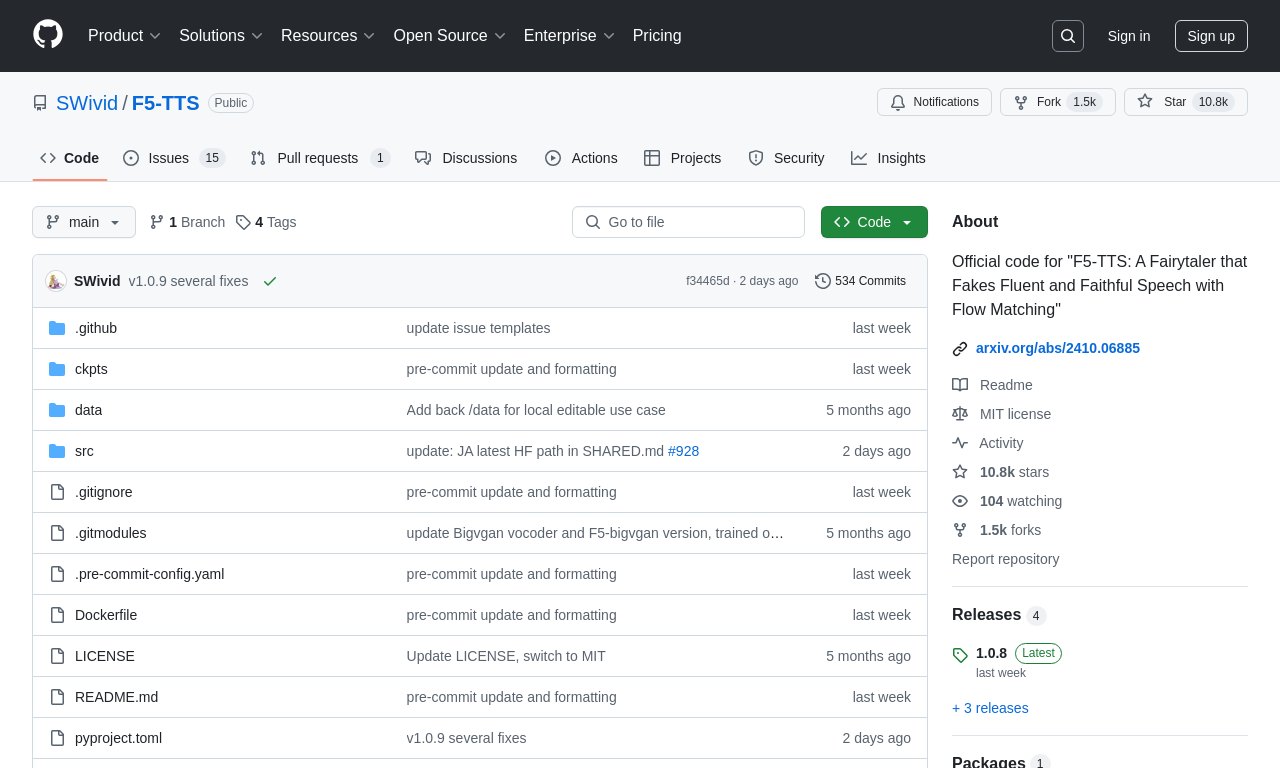

- GitHub Repository: https://github.com/SWivid/F5-TTS

- Hugging Face Model Hub: https://huggingface.co/SWivid/F5-TTS

- arXiv Technical Paper: https://arxiv.org/pdf/2410.06885

- Online Demo: https://huggingface.co/spaces/mrfakename/E2-F5-TTS

Application Scenarios of F5-TTS

- Audiobooks and Podcasts: Convert e-books or articles into audiobooks for visually impaired users or those who enjoy listening to books.

- Voice Assistants and Chatbots: Provide natural-sounding voice feedback for smart devices and online services, enhancing user experience.

- Language Learning and Education: Assist learners in practicing pronunciation and listening, offering tools for language learning.

- News and Media: Automatically generate voice versions of news reports, providing automated content production for radio stations and online news platforms.

- Customer Service: Provide automatic voice responses in customer service systems, improving customer experience.
