Goku

by University of Hong Kong, ByteDanceGoku is a state-of-the-art video generation model designed for joint image and video generation, supporting text-to-video, image-to-video, and text-to-image modes.

What is Goku?

Goku is a state-of-the-art video generation model jointly developed by the University of Hong Kong and ByteDance. It is designed for joint image and video generation, supporting multiple modes including text-to-video, image-to-video, and text-to-image. Built on a rectified flow Transformer framework, Goku excels in generating high-quality, coherent outputs while significantly reducing production costs, especially in advertising.

Key Features

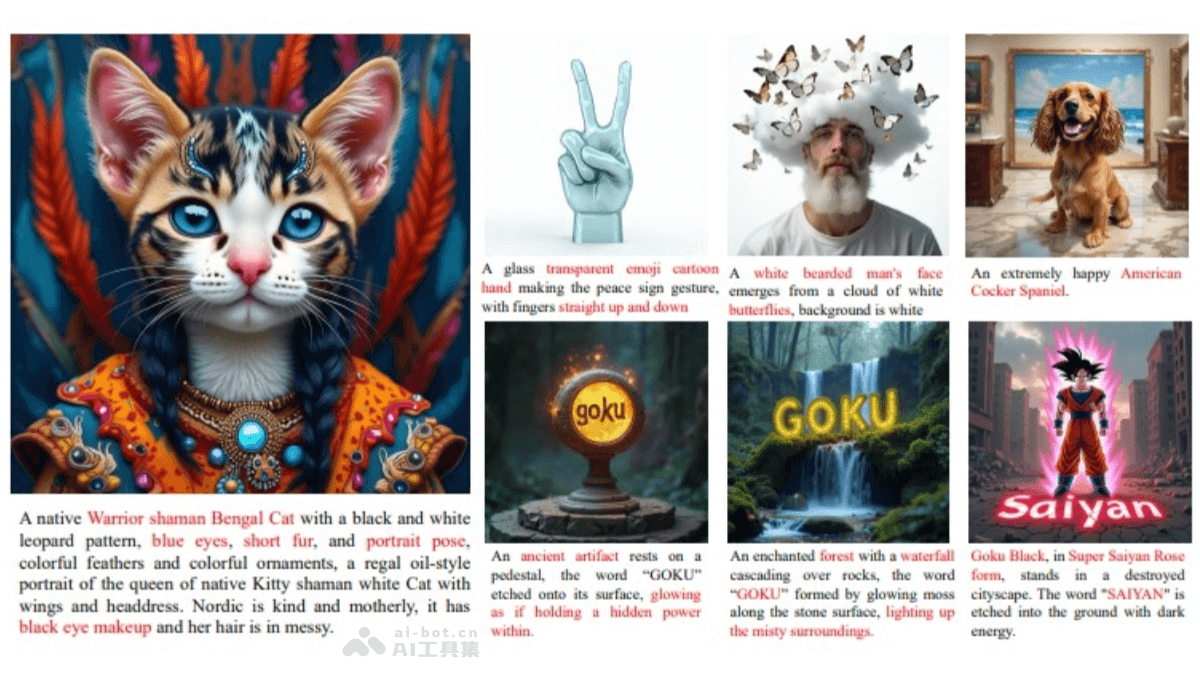

- Text-to-Image: Generates detailed images from text descriptions.

- Text-to-Video: Creates smooth, high-quality videos based on text inputs.

- Image-to-Video: Transforms static images into dynamic videos, maintaining visual consistency.

- Advertising Video Generation (Goku+): Reduces advertising video production costs by 100 times, with stable movements and rich expressions.

- Virtual Digital Human Video Generation: Produces realistic videos of virtual characters, ideal for virtual hosts and customer service.

- Multimodal Generation: Handles complex spatiotemporal dependencies within images and videos through shared latent space and full attention mechanisms.

Technical Principles

- Image-Video Joint VAE: Compresses image and video inputs into a shared latent space for unified processing.

- Transformer Architecture: Includes 2B and 8B parameter models for handling complex dependencies.

- Rectified Flow Formula: Trains via linear interpolation, offering faster convergence than traditional diffusion models.

- Large-scale Dataset: Built on 36 million videos and 160 million images, ensuring high-quality outputs.

- Efficient Training Infrastructure: Utilizes parallel strategies, fault-tolerant mechanisms, and ByteCheckpoint technology for stability and efficiency.

Use Cases

- Advertising: Generate high-quality, low-cost advertising videos.

- Content Creation: Create animations, natural landscapes, and other video content.

- Education: Develop educational videos and training courses.

- Entertainment: Produce video content for movies, TV shows, and animation.

Getting Started

- Project Website: https://saiyan-world.github.io/goku/

- GitHub Repository: https://github.com/Saiyan-World/goku

- HuggingFace Model Library: https://huggingface.co/datasets/saiyan-world/Goku-MovieGenBench

- arXiv Technical Paper: https://arxiv.org/pdf/2502.04896

Model Capabilities

Model Type

multimodal

Supported Tasks

Text-To-Video

Image-To-Video

Text-To-Image

Advertising Video Generation

Virtual Digital Human Video Generation

Tags

Video Generation

Image Generation

Multimodal AI

Advertising

Content Creation

AI Model

ByteDance

HKU

Transformer

Rectified Flow

Usage & Integration

License

Open Source

Screenshots & Images

Primary Screenshot

Additional Images

Stats

97

Views

0

Favorites