Grok-1

by xAI

Grok-1 is a large language model developed by xAI, Elon Musk's AI startup. It has 314 billion parameters and is intended as a general-purpose model for natural language processing tasks.

What is Grok-1?

Grok-1 is a large language model developed by xAI, the AI startup founded by Elon Musk. It is a Mixture of Experts (MoE) model with 314 billion parameters, which made it one of the largest openly released language models at the time of its release. Its weights and network architecture are publicly available under the Apache 2.0 license, which permits free use, modification, and distribution for both personal and commercial purposes.

Key Features

- 314 Billion Parameters: Grok-1 is one of the largest open-source language models, offering extensive capabilities in natural language processing.

- Mixture of Experts (MoE): The model is built from multiple expert networks, and each token is routed to only a subset of them, which improves efficiency relative to running every weight for every token.

- Open Source: Grok-1's weights and architecture are available under the Apache 2.0 license, enabling free use and modification.
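The Mixture of Experts routing mentioned in the list above can be illustrated with a small sketch. This is not xAI's implementation: the function names, the toy experts, and the choice to apply softmax only over the selected experts are assumptions made for illustration. The figures of 8 experts with 2 selected per token come from the project's README rather than from this page.

```python
import math

def softmax(values):
    """Numerically stable softmax over a list of floats."""
    peak = max(values)
    exps = [math.exp(v - peak) for v in values]
    total = sum(exps)
    return [e / total for e in exps]

def route_top_k(router_logits, k=2):
    """Pick the k highest-scoring experts and normalize their gate weights."""
    ranked = sorted(range(len(router_logits)),
                    key=lambda i: router_logits[i], reverse=True)
    chosen = ranked[:k]
    weights = softmax([router_logits[i] for i in chosen])
    return list(zip(chosen, weights))

def moe_layer(token, router_logits, experts, k=2):
    """Weighted sum of the outputs of the selected experts only."""
    out = [0.0] * len(token)
    for index, weight in route_top_k(router_logits, k):
        expert_out = experts[index](token)
        out = [o + weight * e for o, e in zip(out, expert_out)]
    return out

# Hypothetical toy experts: expert i scales its input by (i + 1).
experts = [lambda x, s=i + 1: [s * v for v in x] for i in range(8)]

token = [1.0, 2.0]
router_logits = [0.1, 2.0, -1.0, 0.3, 2.0, 0.0, -0.5, 1.0]

selected = route_top_k(router_logits)
output = moe_layer(token, router_logits, experts)
print(selected)
print(output)
```

Only two of the eight toy experts are evaluated for the token, which is the source of the efficiency gain: the parameter count grows with the number of experts, while the compute per token grows only with `k`.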

Technical Details

- Base Model and Training: Grok-1 is a base model. It was trained on a large amount of text data and has not been fine-tuned for any specific task, so it serves as a general-purpose language model for a range of natural language processing tasks.

- Number of Parameters: Grok-1 has 314 billion parameters in total, counted across all of its experts.

- Mixture of Experts (MoE): A router selects a subset of the expert networks for each token, so only a fraction of the total weights is used in any single forward step.

- Active Parameters: Grok-1 has 86 billion active parameters per token, more than the 70 billion parameters of the largest Llama 2 model.

- Embedding and Positional Embedding: Grok-1 uses rotary position embeddings (RoPE) instead of fixed positional embeddings. RoPE encodes position by rotating the query and key vectors, so attention scores depend on the relative distance between tokens, which helps the model handle long texts.

- Transformer Layers: The model contains 64 Transformer decoder layers, each with a multi-head attention block and a dense block.

- Quantization: Grok-1 supports 8-bit quantization for some weights, which reduces the model's storage and memory requirements and makes it easier to run in environments with limited resources.
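To make the rotary embedding item above more concrete, here is a minimal sketch of the general technique. It is not taken from the Grok-1 code: the function names, the base of 10000, and the convention of rotating adjacent pairs of dimensions are assumptions, and real implementations differ in how they pair dimensions. The sketch shows the key property that the attention score between a query and a key depends only on their relative offset.

```python
import math

def apply_rotary(vec, position, base=10000.0):
    """Rotate each adjacent pair of dimensions by a position-dependent angle."""
    dim = len(vec)
    if dim % 2 != 0:
        raise ValueError("vector length must be even")
    out = []
    for i in range(0, dim, 2):
        # Lower-indexed pairs rotate faster; higher-indexed pairs rotate slower.
        theta = position * base ** (-i / dim)
        c, s = math.cos(theta), math.sin(theta)
        x, y = vec[i], vec[i + 1]
        out.extend([x * c - y * s, x * s + y * c])
    return out

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

query = [1.0, 0.0, 0.5, -0.5]
key = [0.2, 0.7, -0.3, 0.4]

# The same relative offset (3 positions) at two different absolute positions.
score_near = dot(apply_rotary(query, 5), apply_rotary(key, 2))
score_far = dot(apply_rotary(query, 105), apply_rotary(key, 102))
print(score_near, score_far)
```

Both scores agree up to floating-point error, because rotating the query by its position and the key by its position leaves only the difference of the two angles in the dot product.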

Getting Started

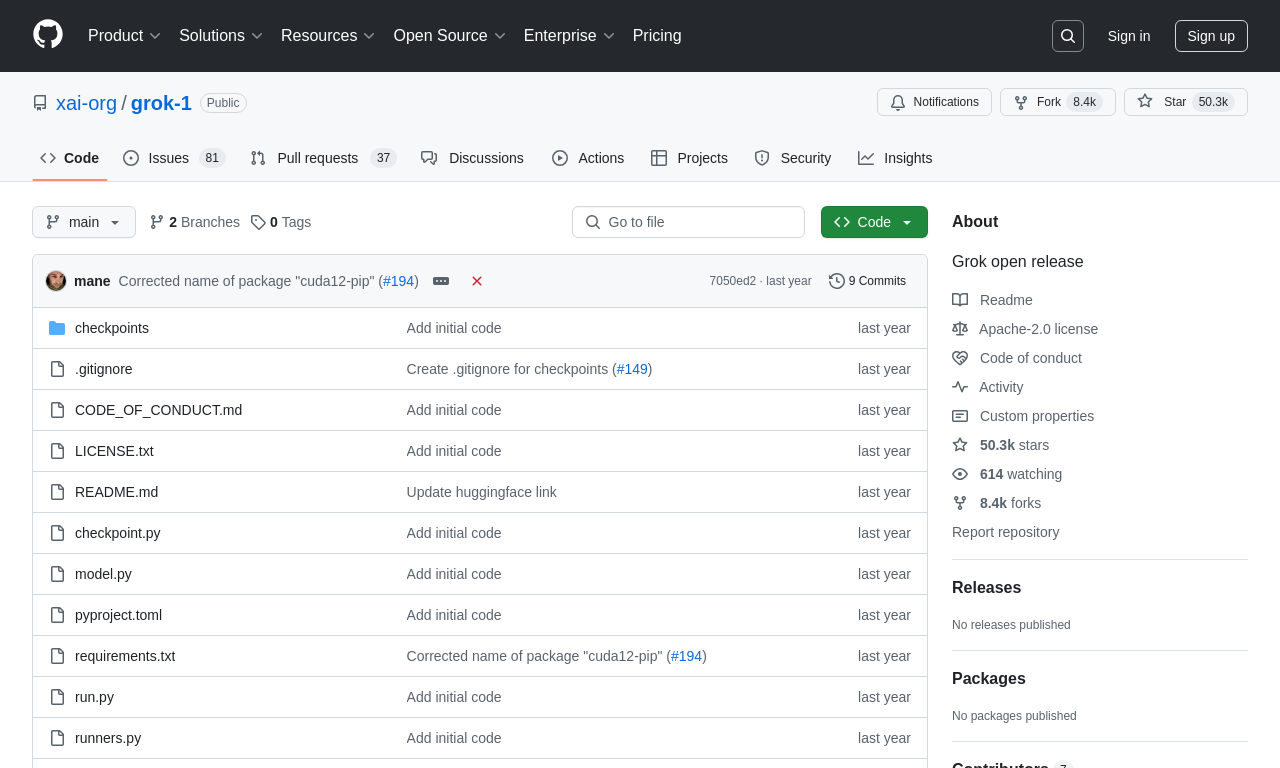

To get started with Grok-1, visit the official GitHub repository: https://github.com/xai-org/grok-1.

Use Cases

Grok-1 is designed to be the engine behind the Grok chatbot, used for natural language processing tasks including Q&A, information retrieval, creative writing, and coding assistance.

Model Capabilities

Model Type: Language

Supported Tasks

- Q&A

- Information Retrieval

- Creative Writing

- Coding Assistance

Tags

Large Language Model

Open Source

Natural Language Processing

Mixture of Experts

Transformer

AI Research

Machine Learning

Language Understanding

Text Generation

OpenAI Alternative

Usage & Integration

Pricing: Free

License: Open source (Apache-2.0)

Requirements

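- About 628 GB of GPU memory in total, which corresponds to 314 billion parameters at 2 bytes each. In practice this means a machine with multiple GPUs rather than a single device.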
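The 628 GB figure is consistent with storing 314 billion parameters at 16-bit precision. The back-of-the-envelope sketch below reproduces that arithmetic and shows the effect of 8-bit storage. The helper names and the 80 GB per-device capacity are hypothetical, and the estimate covers weights only, not activations or other runtime memory.

```python
import math

TOTAL_PARAMS = 314_000_000_000  # parameter count stated on this page

def weight_memory_gb(num_params, bytes_per_param):
    """Memory needed to hold the weights alone, in decimal gigabytes."""
    return num_params * bytes_per_param / 1e9

def min_devices(total_gb, per_device_gb):
    """Smallest number of devices whose combined memory fits the weights."""
    return math.ceil(total_gb / per_device_gb)

fp16_gb = weight_memory_gb(TOTAL_PARAMS, 2)  # 16-bit weights
int8_gb = weight_memory_gb(TOTAL_PARAMS, 1)  # 8-bit weights

# Hypothetical accelerator with 80 GB of memory per device.
devices_fp16 = min_devices(fp16_gb, 80)
devices_int8 = min_devices(int8_gb, 80)

print(fp16_gb, int8_gb, devices_fp16, devices_int8)
```

Under these assumptions the 16-bit weights need at least 8 such devices and the 8-bit weights at least 4, before accounting for any memory used during inference.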
