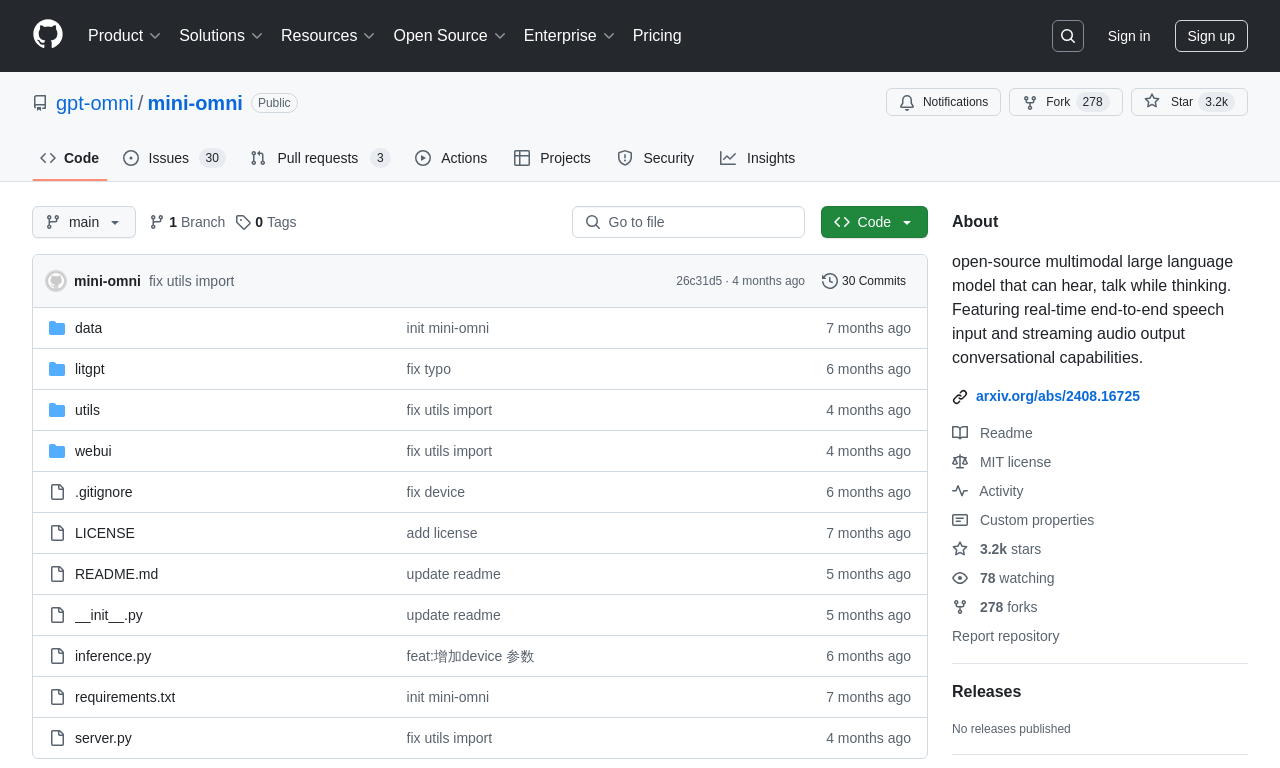

Mini-Omni

by gpt-omni

An open-source, end-to-end voice dialogue model with real-time voice input and output.

What is Mini-Omni?

Mini-Omni is an open-source, end-to-end voice dialogue model that takes speech as input and streams speech as output in real time. It can "think while speaking": it begins producing audio while the rest of the response is still being generated. Because it handles voice-to-voice dialogue directly, it does not need separate ASR or TTS systems. The model uses text-guided voice generation and batch-parallel inference strategies to improve performance while preserving the language capabilities of the underlying language model.

Main Features of Mini-Omni

- Real-Time Voice Interaction: Enables seamless, end-to-end voice dialogue without relying on external ASR or TTS systems.

- Parallel Text and Voice Generation: Simultaneously generates text and voice output during inference, guided by text information for natural and fluent interactions.

- Batch Parallel Inference: Runs a text-only generation pass alongside the audio pass during streaming output, so that spoken responses can draw on the model's text reasoning.

- Audio Language Modeling: Converts continuous speech signals into discrete audio tokens, enabling large language models to perform audio modality inference.

- Cross-Modal Understanding: Processes multiple input modalities, including text and audio, for effective cross-modal interactions.
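The audio language modeling idea above, turning continuous speech into discrete tokens, can be illustrated with a toy nearest-neighbor quantizer. This is a minimal sketch only: the two-dimensional "frames", the four-entry codebook, and the function names `quantize` and `dequantize` are hypothetical, and a real neural codec learns its codebooks rather than using a hand-written one.

```python
import math

def quantize(frames, codebook):
    """Map each continuous frame to the index of its nearest codebook vector."""
    tokens = []
    for frame in frames:
        distances = [math.dist(frame, code) for code in codebook]
        tokens.append(distances.index(min(distances)))
    return tokens

def dequantize(tokens, codebook):
    """Map token indices back to their codebook vectors (a lossy reconstruction)."""
    return [codebook[t] for t in tokens]

# Hypothetical codebook and "audio frames", chosen only for illustration.
codebook = [(0.0, 0.0), (1.0, 0.0), (0.0, 1.0), (1.0, 1.0)]
frames = [(0.1, -0.1), (0.9, 0.2), (0.2, 0.8), (0.95, 1.1)]

tokens = quantize(frames, codebook)
print(tokens)                        # [0, 1, 2, 3]
print(dequantize(tokens, codebook))  # the nearest codebook vectors
```

Once speech is expressed as integer tokens like these, a language model can predict them the same way it predicts text tokens.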

Technical Principles of Mini-Omni

- End-to-End Architecture: Directly processes audio input to text and audio output without separate ASR and TTS systems.

- Text-Guided Voice Generation: Produces the text of a response slightly ahead of the corresponding audio tokens and conditions the audio on that text, leveraging the language model's text processing capabilities.

- Parallel Generation Strategy: Simultaneously generates text and audio tokens during inference, maintaining coherent and consistent dialogues.

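- Batch Parallel Inference: Runs the same input as a small batch, one sample producing text and audio and another producing text only, and uses the text-only output to guide audio generation, which improves the quality of the spoken response.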

- Audio Encoding and Decoding: Uses an audio encoder (e.g., Whisper) to turn input speech into features the language model can consume, and a neural audio codec (e.g., SNAC) to represent output speech as discrete audio tokens and decode them back into audio.
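The parallel, text-guided generation described above can be sketched with a toy decoding loop. Everything here is an assumption made for illustration: `scripted_head` and `audio_head` stand in for a real model's output heads, the replies are canned, and a single audio stream is used where a real system may emit several codebook streams per step. The optional `guide_head` shows one reading of batch-parallel inference, in which a text-only pass supplies the text tokens that the audio follows.

```python
PAD, EOS = "<pad>", "<eos>"

def scripted_head(reply):
    """Build a hypothetical text head that replays `reply` one token per step."""
    def head(text_so_far):
        n = len(text_so_far)
        return reply[n] if n < len(reply) else PAD
    return head

def audio_head(text_so_far, delay):
    """Hypothetical audio head: voices the text token emitted `delay` steps ago."""
    idx = len(text_so_far) - 1 - delay
    if idx < 0:
        return PAD  # audio stream is padded until text has a head start
    source = text_so_far[idx]
    return source if source in (PAD, EOS) else f"audio({source})"

def generate_parallel(text_head, guide_head=None, delay=1, max_steps=16):
    """Emit one text token and one audio token per step.

    If `guide_head` is given, its token replaces the token from `text_head`,
    mimicking a text-only batch row guiding the audio-producing row.
    """
    text, audio = [], []
    for _ in range(max_steps):
        own_token = text_head(text)
        token = guide_head(text) if guide_head else own_token
        text.append(token)
        audio.append(audio_head(text, delay))
        if audio[-1] == EOS:
            break
    return text, audio

# Hypothetical canned replies for the two batch rows.
speech_row = scripted_head(["uh", "hi", EOS])
text_row = scripted_head(["hello", "there", EOS])

text, audio = generate_parallel(speech_row, guide_head=text_row)
print(text)   # ['hello', 'there', '<eos>', '<pad>']
print(audio)  # ['<pad>', 'audio(hello)', 'audio(there)', '<eos>']
```

Because each audio token depends only on text that has already been emitted, audio can start streaming after a one-step delay instead of waiting for the full text response.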

Project Links

- GitHub Repository: https://github.com/gpt-omni/mini-omni

- HuggingFace Model Library: https://huggingface.co/gpt-omni/mini-omni

- arXiv Technical Paper: https://arxiv.org/pdf/2408.16725

Application Scenarios

- Smart Assistants and Virtual Assistants: Helps users perform tasks like setting reminders, querying information, and controlling devices through voice interaction.

- Customer Service: Provides 24/7 automated support, handling inquiries, solving problems, and executing transactions.

- Smart Home Control: Controls home devices such as lights, temperature, and security systems via voice commands.

- Education and Training: Offers voice-interactive learning experiences for subjects like languages and history.

- In-Car Systems: Integrates into in-car infotainment systems for voice-controlled navigation, music playback, and communication.

Model Capabilities

- Model Type: Multimodal

- Supported Tasks: Real-Time Voice Interaction, Text and Voice Generation, Cross-Modal Understanding, Audio Language Modeling, Batch Parallel Inference

Tags

Voice Dialogue, Real-Time Interaction, Open-Source, Multimodal AI, Voice Technology, AI Models, Conversational AI, Text-Guided Generation, Speech Synthesis, Cross-Modal Understanding

Usage & Integration

- Pricing: Free

- License: Open source
