Phi-3.5

by Microsoft

Phi-3.5 is Microsoft's latest AI model series, designed for lightweight inference, mixture-of-experts (MoE) systems, and multimodal tasks.

What is Phi-3.5?

Phi-3.5 is a new generation of AI models from Microsoft. The series includes three specialized models: Phi-3.5-mini-instruct, Phi-3.5-MoE-instruct, and Phi-3.5-vision-instruct. Each is tuned for a different kind of workload, and all three support a 128K-token context length, which makes them suitable for long documents and complex multilingual scenarios.

Key Features and Capabilities

Phi-3.5-mini-instruct

- Parameter Count: Approximately 3.82 billion parameters.

- Design Purpose: Built for instruction following and fast inference.

- Context Support: Supports 128k token context length.

- Use Cases: Ideal for environments with limited memory or computational resources. Capable of tasks like code generation, math problem solving, and logic-based reasoning.

- Performance: Strong at multilingual and multi-turn dialogue tasks; in Microsoft's reported benchmarks it outperforms models of similar size.
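For multi-turn dialogue, the Phi-3.5-mini-instruct model card documents a chat format in which each turn is wrapped as `<|role|>` followed by the content and `<|end|>`, and the prompt ends with an open `<|assistant|>` tag for the model to complete. The function below, a hypothetical helper, renders that format by hand so the structure is visible. It is a sketch, not the tokenizer's implementation: in real code, `tokenizer.apply_chat_template` is the safer choice, since it follows the tokenizer's own template, including any special tokens this sketch omits.

```python
from typing import List, Tuple


def format_phi35_prompt(turns: List[Tuple[str, str]], system: str = "") -> str:
    """Render (role, content) turns in the Phi-3.5 chat format (as shown on the
    model card) and leave the prompt open for the assistant's next reply.

    Hypothetical helper for illustration; prefer tokenizer.apply_chat_template.
    """
    parts = []
    if system:
        parts.append(f"<|system|>\n{system}<|end|>\n")
    for role, content in turns:
        if role not in ("user", "assistant"):
            raise ValueError(f"unexpected role: {role!r}")
        parts.append(f"<|{role}|>\n{content}<|end|>\n")
    # Open assistant tag: generation continues from here.
    parts.append("<|assistant|>\n")
    return "".join(parts)


# A short multi-turn conversation: earlier assistant replies are kept as context.
prompt = format_phi35_prompt(
    [
        ("user", "Translate 'cat' into French."),
        ("assistant", "Chat."),
        ("user", "And into German?"),
    ],
    system="You are a helpful assistant.",
)
print(prompt)
```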

Phi-3.5-MoE-instruct

- Parameter Count: Approximately 41.9 billion parameters.

- Architecture: Uses a mixture-of-experts (MoE) architecture with 16 experts, of which 2 are active for each token (about 6.6 billion active parameters).

- Context Support: Supports 128k token context length.

- Performance: Strong at code, math, and multilingual understanding; in Microsoft's reported benchmarks it often outperforms larger models.
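The point of a mixture-of-experts layer is that each token is processed by only a few experts chosen by a router, so compute per token stays far below what the total parameter count suggests. The sketch below shows generic top-k softmax gating on plain Python lists. It is an illustrative toy (four scalar "experts", a hypothetical linear router), not Microsoft's routing implementation, which differs in its details.

```python
import math
from typing import Callable, List, Sequence

Vector = List[float]
Expert = Callable[[Vector], Vector]


def softmax(xs: Sequence[float]) -> List[float]:
    """Numerically stable softmax."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def moe_layer(x: Vector, router: Sequence[Vector], experts: Sequence[Expert], k: int = 2) -> Vector:
    """Toy top-k mixture-of-experts layer for a single token vector x.

    router[i] is a hypothetical learned vector; its dot product with x is
    expert i's gate logit. Only the k highest-scoring experts are evaluated,
    and their outputs are mixed with gates renormalized over those k.
    """
    logits = [sum(w * xi for w, xi in zip(r, x)) for r in router]
    top = sorted(range(len(experts)), key=lambda i: logits[i], reverse=True)[:k]
    gates = softmax([logits[i] for i in top])
    out = [0.0] * len(x)
    for gate, i in zip(gates, top):
        y = experts[i](x)  # experts outside the top k are never run
        out = [o + gate * yi for o, yi in zip(out, y)]
    return out


def make_scaler(c: float) -> Expert:
    """Toy expert: multiplies its input by a constant."""
    return lambda v: [c * vi for vi in v]


experts = [make_scaler(c) for c in (1.0, 2.0, 3.0, 4.0)]
router = [[0.0, 0.0], [2.0, 0.0], [1.0, 0.0], [-1.0, 0.0]]
demo = moe_layer([1.0, 0.0], router, experts, k=2)
print(demo)  # roughly [2.269, 0.0]: experts 1 and 2 win the routing
```

With `k=2`, only two of the four experts execute for this token, which mirrors (at toy scale) why an MoE model's active parameter count is much smaller than its total.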

Phi-3.5-vision-instruct

- Parameter Count: Approximately 4.15 billion parameters.

- Functionality: Integrates text and image processing.

- Use Cases: Suitable for general image understanding, OCR, chart and table understanding, and video summarization.

- Context Support: Supports 128k token context length.
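For image input, the Phi-3.5-vision-instruct model card places numbered placeholders such as `<|image_1|>` in the user message, one per image, ahead of the text. The helper below, a minimal sketch with a hypothetical name, builds that message list without loading the model, which is useful for multi-image tasks such as comparing charts or summarizing a sequence of video frames.

```python
from typing import Dict, List


def build_vision_messages(num_images: int, question: str) -> List[Dict[str, str]]:
    """One user turn: numbered image placeholders (<|image_1|>, <|image_2|>, ...),
    one per line, followed by the question. Hypothetical helper for illustration."""
    if num_images < 1:
        raise ValueError("need at least one image")
    placeholder = "".join(f"<|image_{i}|>\n" for i in range(1, num_images + 1))
    return [{"role": "user", "content": placeholder + question}]


messages = build_vision_messages(3, "Summarize what changes across these frames.")
print(messages[0]["content"])
```

In the model card's example, this list is rendered with `processor.tokenizer.apply_chat_template(messages, tokenize=False, add_generation_prompt=True)` and passed, together with the PIL images, to `processor(prompt, images, return_tensors="pt")`, where `processor` comes from `AutoProcessor.from_pretrained("microsoft/Phi-3.5-vision-instruct", trust_remote_code=True)`. Those details are worth confirming against the current model card.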

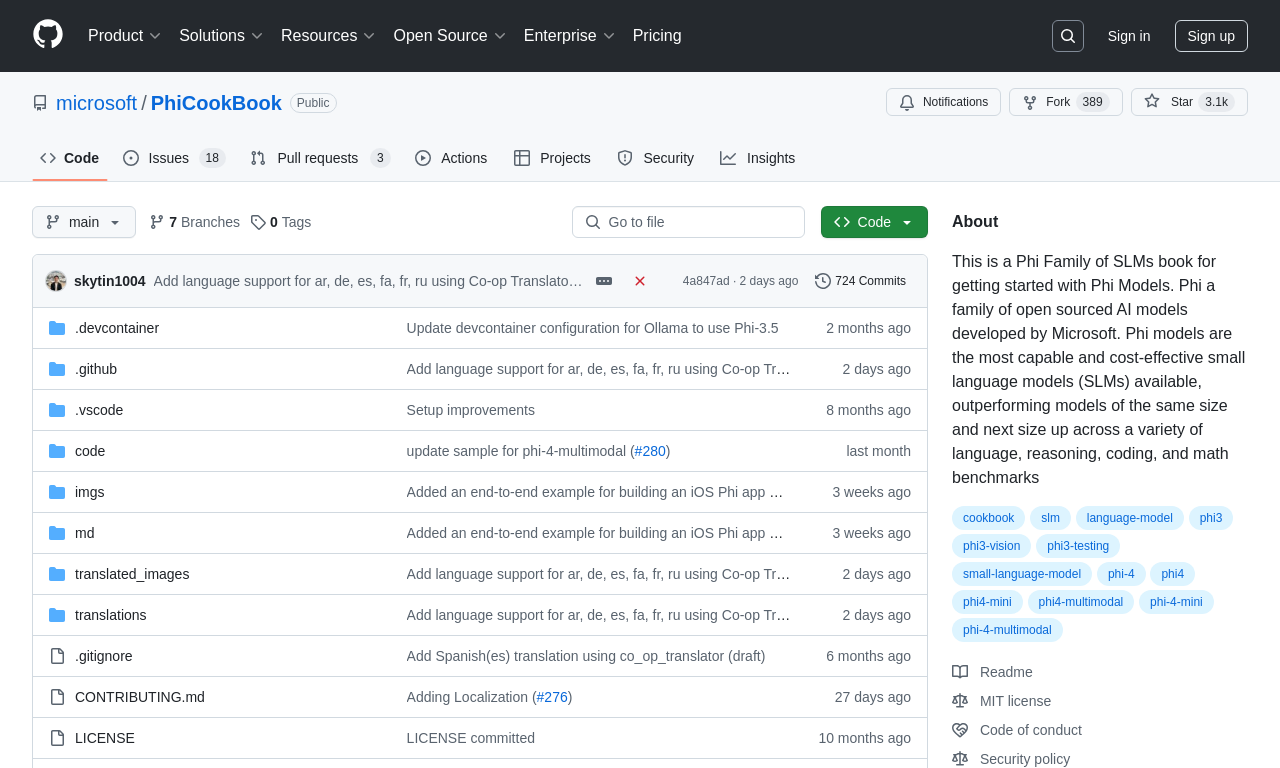

Getting Started

- Environment Setup: Ensure the development environment meets the hardware and software requirements.

- Model Acquisition: Download the model weights and code from the Hugging Face model repository (for example, microsoft/Phi-3.5-mini-instruct).

- Dependency Installation: Install the required libraries as per the model's documentation.

- Model Loading: Load the Phi-3.5 model with the Transformers library, or access it through a hosted API.

- Data Processing: Prepare input data and preprocess it according to the model's requirements.

- Model Configuration: Configure model parameters based on the application scenario.

- Task Execution: Use the model to perform tasks like text generation, question answering, or text classification.
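The loading, configuration, and execution steps above can be sketched in Python with the Hugging Face Transformers library. This is a minimal sketch, assuming a recent `transformers` release plus `torch` and `accelerate` are installed and that the weights come from the `microsoft/Phi-3.5-mini-instruct` repository. The generation settings are illustrative, and the names `build_messages`, `generate`, and `GENERATION_ARGS` are hypothetical. The Transformers import is deferred into `generate` so the message helper works without the library installed.

```python
from typing import Dict, List

MODEL_ID = "microsoft/Phi-3.5-mini-instruct"

# Illustrative greedy-decoding settings; adjust per application.
GENERATION_ARGS = {
    "max_new_tokens": 500,
    "return_full_text": False,  # return only the newly generated reply
    "do_sample": False,         # greedy decoding
}


def build_messages(user_prompt: str, system_prompt: str = "You are a helpful assistant.") -> List[Dict[str, str]]:
    """Chat-style input accepted by the Transformers text-generation pipeline."""
    return [
        {"role": "system", "content": system_prompt},
        {"role": "user", "content": user_prompt},
    ]


def generate(user_prompt: str, model_id: str = MODEL_ID) -> str:
    """Load the model (downloading it on first use) and return its reply."""
    from transformers import AutoModelForCausalLM, AutoTokenizer, pipeline

    tokenizer = AutoTokenizer.from_pretrained(model_id)
    model = AutoModelForCausalLM.from_pretrained(
        model_id,
        torch_dtype="auto",  # use the checkpoint's native precision
        device_map="auto",   # place weights on GPU if available (needs accelerate)
    )
    pipe = pipeline("text-generation", model=model, tokenizer=tokenizer)
    output = pipe(build_messages(user_prompt), **GENERATION_ARGS)
    return output[0]["generated_text"]
```

Calling `generate("Explain mixture-of-experts models in two sentences.")` downloads several gigabytes of weights on first use and returns the reply as a string. Switching to `"do_sample": True` with a `temperature` value enables sampling instead of greedy decoding.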

Application Scenarios

- Phi-3.5-mini-instruct: Compact and efficient, suitable for fast text processing and code generation in embedded systems and mobile applications.

- Phi-3.5-MoE-instruct: Offers stronger reasoning for data analysis and multilingual text; suited to interdisciplinary research and specialized professional fields.

- Phi-3.5-vision-instruct: Advanced multimodal processing capabilities, suitable for automatic image annotation, video surveillance, and in-depth analysis of complex visual data.
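Which model fits a given deployment depends largely on memory. A common rule of thumb is that weight memory is roughly the parameter count times the bytes per parameter. The sketch below applies that rule to the parameter counts listed on this page. It is a rough estimate under stated assumptions: it ignores activations, the KV cache (which grows with context length and becomes significant near 128K tokens), and framework overhead. The dtype table and `weight_memory_gb` are illustrative names.

```python
# Parameter counts in billions, as listed on this page.
PARAMS_B = {
    "Phi-3.5-mini-instruct": 3.82,
    "Phi-3.5-MoE-instruct": 41.9,
    "Phi-3.5-vision-instruct": 4.15,
}

# Storage cost per parameter for common precisions.
BYTES_PER_PARAM = {"fp32": 4.0, "bf16": 2.0, "int8": 1.0, "int4": 0.5}


def weight_memory_gb(params_billion: float, dtype: str = "bf16") -> float:
    """Approximate memory for the weights alone, in GB (1e9 bytes).

    params_billion * 1e9 params * bytes_per_param / 1e9 bytes per GB
    simplifies to params_billion * bytes_per_param.
    """
    return params_billion * BYTES_PER_PARAM[dtype]


if __name__ == "__main__":
    for name, params in PARAMS_B.items():
        row = ", ".join(f"{dt}: {weight_memory_gb(params, dt):.1f} GB" for dt in ("bf16", "int4"))
        print(f"{name}: {row}")
```

In bf16 this gives roughly 7.6 GB for mini, 8.3 GB for vision, and 83.8 GB for MoE. For the MoE model, all experts must be resident in memory even though only a few run per token, so its low active-parameter count saves compute, not weight memory.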

Model Capabilities

Model Type

Multimodal

Supported Tasks

Text Generation

Question Answering

Code Generation

Math Problem Solving

Image Understanding

OCR

Video Summarization

Tags

AI Models

Machine Learning

Natural Language Processing

Multimodal AI

Lightweight Inference

Expert Mixture Systems

Multilingual Processing

Multi-turn Dialogue

Open Source

Benchmark Performance

Usage & Integration

Pricing

Free

API Access

Available

License

Open Source

MIT

Requirements

- Python environment

- Transformers library

- PyTorch
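Before downloading any weights, a quick check that these requirements are importable can save time. This sketch uses only the standard library. The minimum Python version (3.8) is a placeholder assumption, not a documented requirement, and `check_requirements` is a hypothetical helper name.

```python
import importlib.util
import sys
from typing import Dict, Tuple

# Import names for the libraries listed above.
REQUIRED_MODULES = ("transformers", "torch")


def check_requirements(min_python: Tuple[int, int] = (3, 8)) -> Dict[str, bool]:
    """Report whether the interpreter is new enough (placeholder threshold) and
    whether each required module can be found, without importing it."""
    report = {"python": sys.version_info[:2] >= min_python}
    for module in REQUIRED_MODULES:
        report[module] = importlib.util.find_spec(module) is not None
    return report


if __name__ == "__main__":
    for item, ok in check_requirements().items():
        print(f"{item}: {'ok' if ok else 'missing'}")
```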
