BrushNet

What is BrushNet?

BrushNet is a plug-and-play image inpainting model developed by Tencent's ARC Lab and researchers from the University of Hong Kong. It is built on a diffusion model and uses a dual-branch architecture to handle masked image regions: one branch extracts pixel-level features from the masked image, while the other generates the image. This design lets BrushNet inject mask information hierarchically, so that the restored region is high quality and stays coherent with the rest of the original image.

According to the authors' comparisons with earlier image inpainting methods (such as Blended Latent Diffusion, Stable Diffusion Inpainting, HD-Painter, and PowerPaint), BrushNet produces results that are more coherent in style, content, and color, and that follow the text prompt more closely.

BrushNet Official Links

- Official Project Page: https://tencentarc.github.io/BrushNet/

- GitHub Repository: https://github.com/TencentARC/BrushNet

- arXiv Research Paper: https://arxiv.org/abs/2403.06976

Features of BrushNet

- Repair Different Types of Images: BrushNet can repair images of various scenes, such as humans, animals, indoor and outdoor scenes, and different styles, including natural images, pencil drawings, anime, illustrations, and watercolors.

- Pixel-Level Repair: BrushNet can identify and process masked areas in images, performing precise repairs at the pixel level to ensure seamless integration with the original image.

- Preserve Unmasked Areas: Through hierarchical control and specific blur fusion strategies, BrushNet can preserve unmasked areas during the repair process, avoiding unnecessary changes to the original image content.

- Compatibility with Pre-trained Models: As a plug-and-play model, BrushNet can be combined with various pre-trained diffusion models (such as DreamShaper, epiCRealism, and MeinaMix) to leverage their powerful generative capabilities for repair tasks.

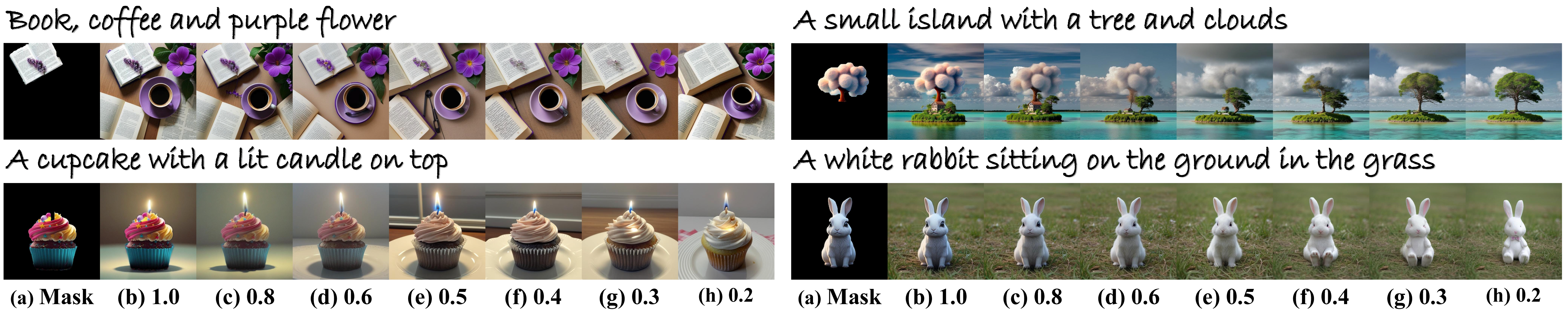

- Flexibility and Control: Users can adjust the model's parameters to control the scale and detail of the repair, including the size of the repair area and the level of detail in the repair content.
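One way to picture the adjustable control mentioned in the list above is as a single scale factor applied where the inpainting branch's features are added to the base model's features. The sketch below is illustrative only and rests on assumptions: `fuse_features` and `scale` are hypothetical names rather than BrushNet's actual API, the fusion is assumed to be a simple per-layer addition, and real features are multi-channel tensors rather than short lists of floats.

```python
def fuse_features(base_features, branch_features, scale=1.0):
    """Add branch features to base features, layer by layer.

    Both arguments are lists of layers; each layer is a list of floats.
    `scale` (a hypothetical parameter) sets how strongly the inpainting
    branch steers the base model: 0.0 ignores the branch entirely.
    """
    if len(base_features) != len(branch_features):
        raise ValueError("both branches must expose the same number of layers")
    fused = []
    for base_layer, branch_layer in zip(base_features, branch_features):
        if len(base_layer) != len(branch_layer):
            raise ValueError("layer sizes must match")
        fused.append([b + scale * g for b, g in zip(base_layer, branch_layer)])
    return fused


# Toy features for two layers of different width.
base = [[0.5, -1.0], [2.0, 0.0, 1.0]]
branch = [[0.25, 0.5], [-1.0, 1.0, 0.0]]

unchanged = fuse_features(base, branch, scale=0.0)  # branch has no effect
guided = fuse_features(base, branch, scale=1.0)     # branch fully applied
print(unchanged)
print(guided)
```

With `scale=0.0` the base model's features pass through untouched, which is one way to see why a plug-in branch of this kind can leave the pre-trained model's weights unmodified.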

How BrushNet Works

BrushNet builds on a diffusion model and performs image inpainting with a dual-branch architecture.

In brief, it works as follows:

- Dual-Branch Architecture: The core of BrushNet is a decomposed dual-branch architecture: one branch processes the features of the masked image, while the other generates the image content.

- Masked Image Feature Extraction: In the mask branch, the model uses a Variational Autoencoder (VAE) to encode the masked image and extract its latent features. These features are then used to guide the image repair process.

- Pre-trained Diffusion Model: In the generation branch, the model uses a pre-trained diffusion model to generate image content. This model has learned how to recover clear images from noise.

- Feature Fusion: The extracted masked image features are gradually fused into the pre-trained diffusion model, allowing for hierarchical control of the repair process.

- Denoising and Generation: During the reverse diffusion process, the model iteratively denoises the image, gradually recovering a clear image from noise. Each step considers the features of the masked image to ensure that the repaired area remains visually consistent with the rest of the original image.

- Blur Fusion Strategy: To better preserve the details of unmasked areas, BrushNet employs a blur fusion strategy: the generated result is composited with the original image through a blurred mask, which softens hard edges and unnatural transitions at the mask boundary.

- Output Repaired Image: Finally, the model outputs a repaired image where the masked area is naturally and coherently filled, while the original content of the unmasked area is preserved.
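The blur fusion step in the list above can be sketched in a few lines. This is a minimal illustration under stated assumptions, not BrushNet's implementation: the helper names `box_blur` and `blend` are hypothetical, a simple box filter stands in for whatever blur the model actually uses, images are single-channel lists of rows, and the mask is assumed to be 1.0 where content is regenerated and 0.0 where the original is kept.

```python
def box_blur(mask, radius=1):
    """Blur a 2D mask (list of rows) with a (2*radius+1)-wide box filter.

    Pixels outside the image are skipped, so borders average fewer values.
    """
    height, width = len(mask), len(mask[0])
    blurred = []
    for y in range(height):
        row = []
        for x in range(width):
            total, count = 0.0, 0
            for yy in range(max(0, y - radius), min(height, y + radius + 1)):
                for xx in range(max(0, x - radius), min(width, x + radius + 1)):
                    total += mask[yy][xx]
                    count += 1
            row.append(total / count)
        blurred.append(row)
    return blurred


def blend(original, generated, mask, radius=1):
    """Composite generated pixels into the original using a blurred mask.

    Assumption: mask is 1.0 where content is regenerated, 0.0 elsewhere.
    """
    soft = box_blur(mask, radius)
    return [
        [m * g + (1.0 - m) * o for o, g, m in zip(o_row, g_row, m_row)]
        for o_row, g_row, m_row in zip(original, generated, soft)
    ]


# One-row toy image: original is black (0.0), generated content is white (1.0).
original = [[0.0, 0.0, 0.0, 0.0, 0.0, 0.0]]
generated = [[1.0, 1.0, 1.0, 1.0, 1.0, 1.0]]
mask = [[0.0, 0.0, 0.0, 1.0, 1.0, 1.0]]

result = blend(original, generated, mask)
print(result)
```

Far from the mask boundary the output equals the original or the generated pixel exactly, while pixels next to the boundary take intermediate values. In this sketch the soft transition slightly alters unmasked pixels adjacent to the boundary in exchange for a seam without hard edges.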
