CAD-MLLM

CAD-MLLM is a system that generates parametric CAD models from various inputs like text descriptions, images, and point clouds using command sequences and large language models.

What is CAD-MLLM?

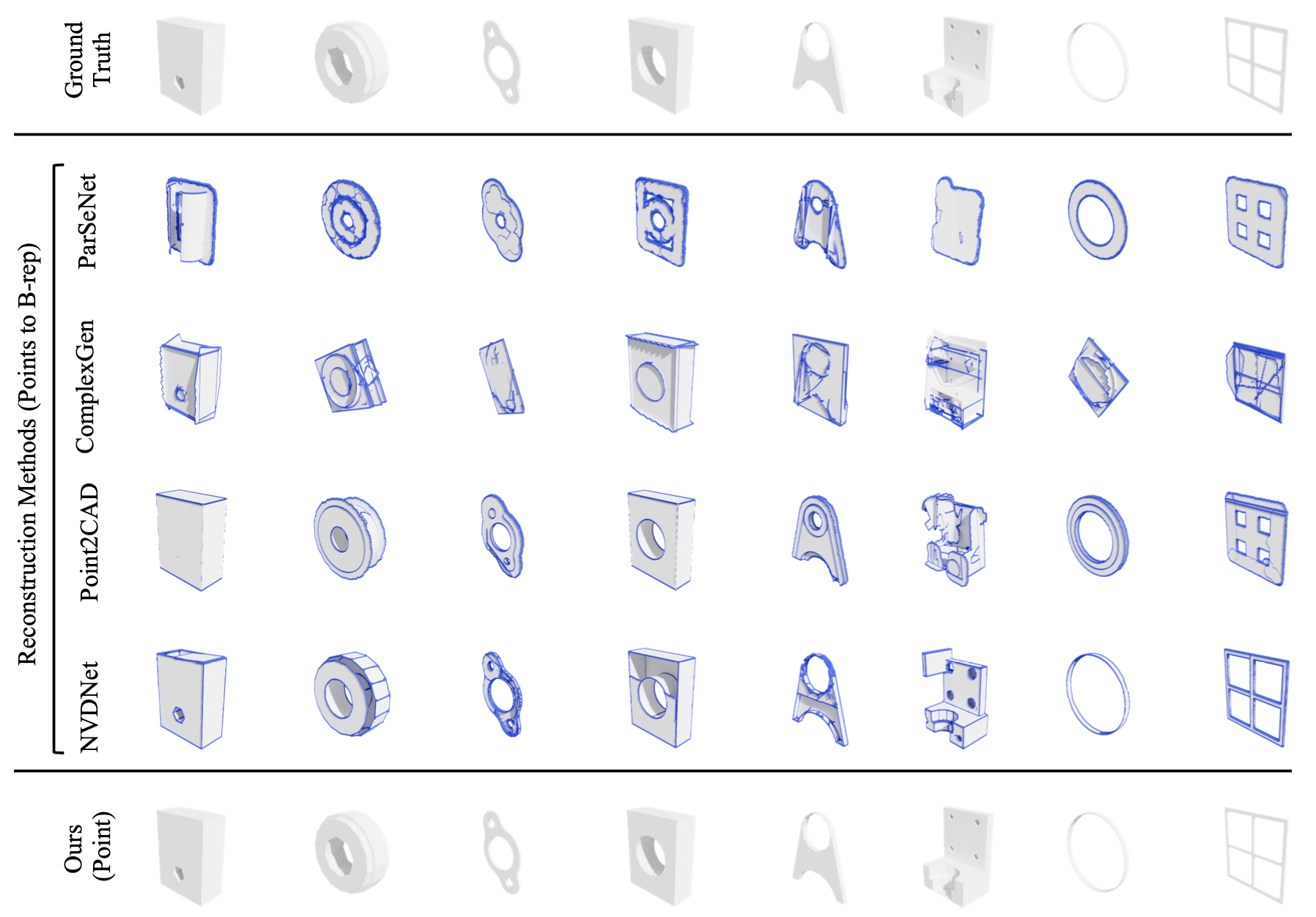

CAD-MLLM is a computer-aided design (CAD) model generation system developed by researchers from ShanghaiTech University, Transcengram, DeepSeek AI, and the University of Hong Kong. It generates parametric CAD models from text descriptions, images, point clouds, or any combination of them. The system represents each CAD model as a command sequence and uses a large language model (LLM) to align the multimodal inputs with that representation and generate a complete model. CAD-MLLM also introduces Omni-CAD, a large-scale multimodal dataset, along with new evaluation metrics that assess the topological quality and surface closure of generated models. The authors report that it outperforms existing methods and remains robust to noisy or incomplete inputs.
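
To make the idea of a "command sequence" concrete, the following is a minimal sketch of how a sketch-and-extrude model could be serialized into a token-like text stream. The command names, the parameter layout, and the 256-bin quantization are illustrative assumptions, not the exact format used by CAD-MLLM.

```python
from dataclasses import dataclass
from typing import List, Tuple

# Hypothetical command vocabulary for a sketch-and-extrude model (assumption).
COMMANDS = ("SOL", "LINE", "EXTRUDE", "EOS")
NUM_BINS = 256  # assumed quantization resolution per parameter


@dataclass(frozen=True)
class Command:
    name: str
    params: Tuple[float, ...] = ()  # continuous parameters, normalized to [-1, 1]


def quantize(value: float, num_bins: int = NUM_BINS) -> int:
    """Map a value in [-1, 1] to an integer bin in [0, num_bins - 1]."""
    clipped = max(-1.0, min(1.0, value))
    return min(num_bins - 1, int((clipped + 1.0) / 2.0 * num_bins))


def dequantize(bin_index: int, num_bins: int = NUM_BINS) -> float:
    """Return the center of a bin; inverts quantize up to half a bin width."""
    return (bin_index + 0.5) / num_bins * 2.0 - 1.0


def to_text(sequence: List[Command]) -> str:
    """Serialize commands as plain text that an LLM tokenizer could consume."""
    parts = []
    for cmd in sequence:
        if cmd.name not in COMMANDS:
            raise ValueError(f"unknown command: {cmd.name}")
        bins = " ".join(str(quantize(p)) for p in cmd.params)
        parts.append(f"{cmd.name} {bins}".strip())
    return " ; ".join(parts)


# A square profile (each LINE stores its end point) extruded by 0.5.
square_block = [
    Command("SOL"),
    Command("LINE", (0.5, -0.5)),
    Command("LINE", (0.5, 0.5)),
    Command("LINE", (-0.5, 0.5)),
    Command("LINE", (-0.5, -0.5)),
    Command("EXTRUDE", (0.5,)),
    Command("EOS"),
]

print(to_text(square_block))
```

Because every parameter stays explicit in the sequence, editing the model amounts to changing a number (for example the extrusion distance) and regenerating the geometry, which is what makes the output parametric.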

Main Features of CAD-MLLM

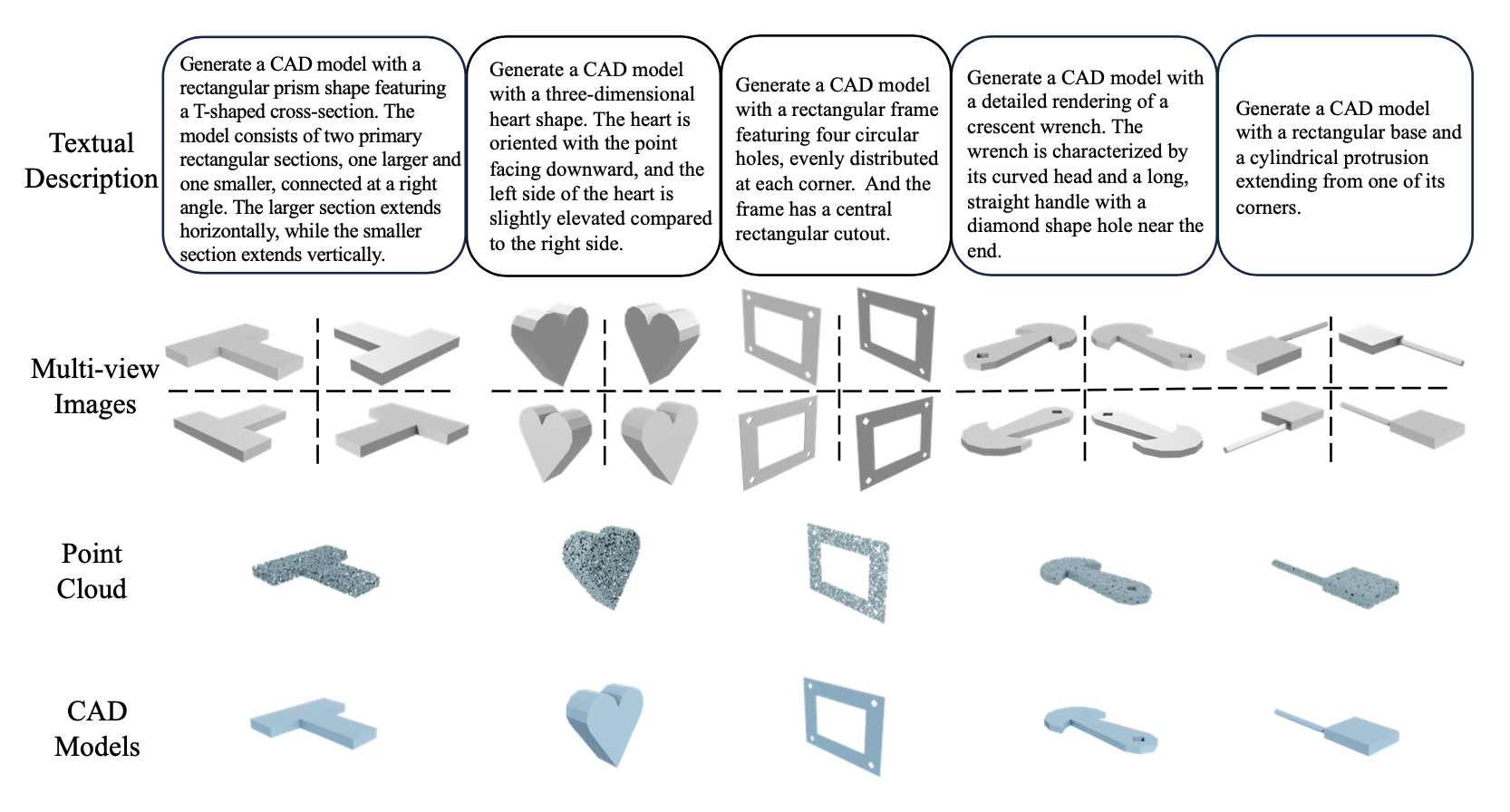

- Multimodal Input Processing: Processes various input forms including text descriptions, images, and point clouds to generate CAD models.

- Parametric CAD Model Generation: Generates parametric CAD models that users can edit and adjust.

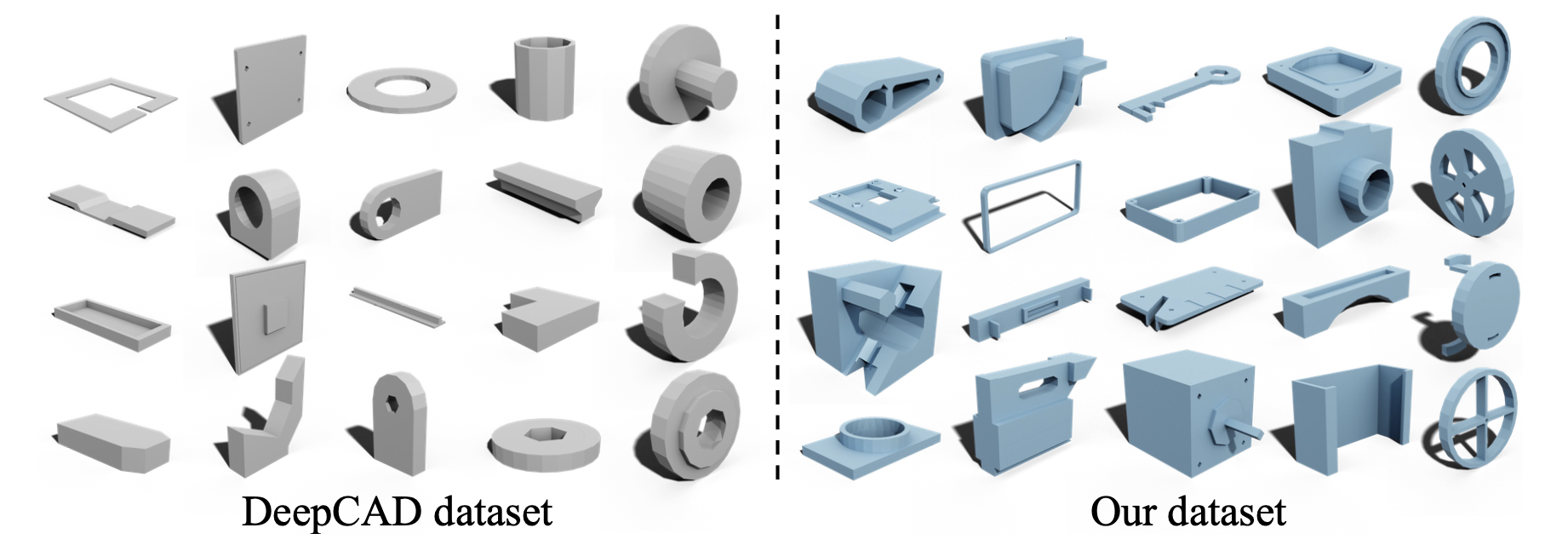

- Dataset Construction and Annotation: Introduces the Omni-CAD dataset, which includes text descriptions, multi-view images, point clouds, and corresponding CAD command sequences.

- Innovative Evaluation Metrics: Introduces new evaluation metrics to assess the topological quality and surface closure of generated CAD models.

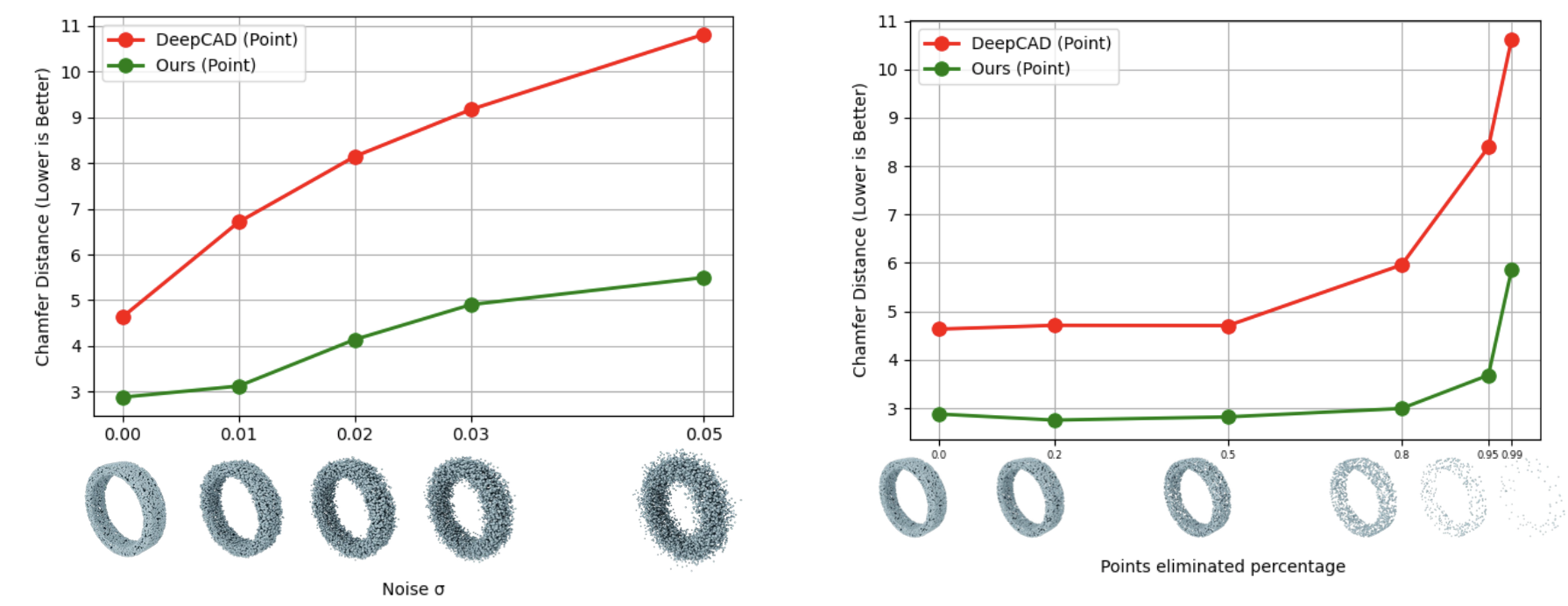

- Robustness: Demonstrates high robustness when dealing with noise and missing data.

- Interactive Design: Allows users to design CAD models easily based on simple instructions and illustrations, enabling non-experts to realize their design ideas.
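
As a rough illustration of what a surface-closure check measures, the sketch below tests whether a triangle mesh is closed by counting how many faces share each edge. This is a simplified mesh-level proxy written for this overview, not the definition of any metric in the CAD-MLLM paper; the function names are hypothetical.

```python
from collections import Counter
from typing import Dict, Iterable, List, Tuple

Triangle = Tuple[int, int, int]  # vertex indices of one face
Edge = Tuple[int, int]           # undirected edge, smaller index first


def edge_use_counts(triangles: Iterable[Triangle]) -> Dict[Edge, int]:
    """Count how many triangles use each undirected edge."""
    counts: Counter = Counter()
    for a, b, c in triangles:
        for u, v in ((a, b), (b, c), (c, a)):
            counts[(min(u, v), max(u, v))] += 1
    return dict(counts)


def open_edges(triangles: List[Triangle]) -> List[Edge]:
    """Edges used by exactly one triangle, i.e. edges on a hole or open boundary."""
    return sorted(e for e, n in edge_use_counts(triangles).items() if n == 1)


def is_closed(triangles: List[Triangle]) -> bool:
    """A non-empty mesh is closed here if every edge is shared by exactly two faces."""
    counts = edge_use_counts(triangles)
    return bool(counts) and all(n == 2 for n in counts.values())


tetrahedron = [(0, 1, 2), (0, 3, 1), (1, 3, 2), (2, 3, 0)]
punctured = tetrahedron[:-1]  # drop one face to open a hole

print(is_closed(tetrahedron))  # True
print(open_edges(punctured))   # [(0, 2), (0, 3), (2, 3)]
```

A generated model that fails a check of this kind cannot bound a solid volume, which is why closure matters for downstream manufacturing use.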

Technical Principles of CAD-MLLM

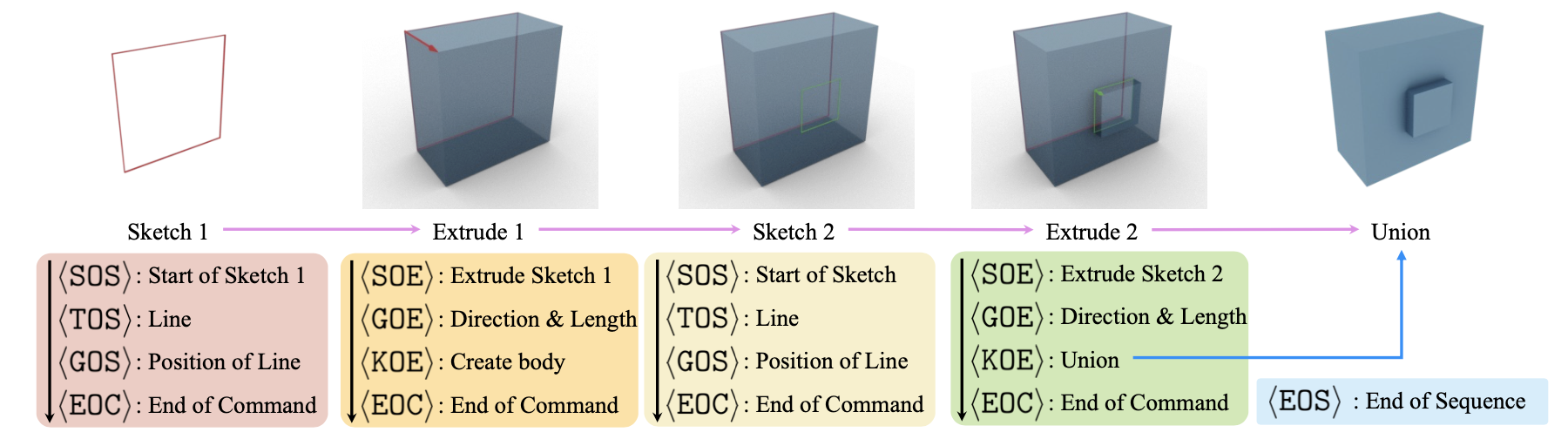

- Command Sequence Representation: Represents each CAD model as the sequence of commands that constructs it, then vectorizes that sequence into a data stream a large language model (LLM) can consume.

- Multimodal Data Alignment: Uses the LLM to align features from the different input modalities with the vectorized CAD command sequences, so that a single model can understand and process any supported input.

- Network Architecture: Combines a visual data alignment module, a point data alignment module, and the LLM to support cross-modal input.

- Feature Space Sharing: Non-text inputs are first encoded by a frozen encoder, then mapped into the LLM's feature space by a projection layer so that all modalities share one representation.

- Low-Rank Adaptation (LoRA) Fine-Tuning: Combines the prompt with the multimodal embeddings and fine-tunes the LLM with LoRA so that it generates accurate CAD command sequences.

- Data Augmentation Methods: Proposes a data annotation pipeline and data augmentation methods, which are used to build Omni-CAD, the new multimodal conditional CAD dataset.
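
The feature-space sharing and LoRA steps above can be sketched in a few lines. This is a toy, dependency-free illustration under stated assumptions: the dimensions, the random stand-in for a frozen encoder's output, and the class name `LoRALinear` are all hypothetical, and a real system would use a deep learning framework rather than Python lists.

```python
import random
from typing import List

Vector = List[float]
Matrix = List[Vector]


def matvec(m: Matrix, v: Vector) -> Vector:
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(w * x for w, x in zip(row, v)) for row in m]


def random_matrix(rows: int, cols: int, rng: random.Random) -> Matrix:
    return [[rng.gauss(0.0, 0.1) for _ in range(cols)] for _ in range(rows)]


class LoRALinear:
    """Frozen weight W plus a trainable low-rank update (alpha / r) * B @ A."""

    def __init__(self, d_in: int, d_out: int, rank: int, alpha: float,
                 rng: random.Random) -> None:
        self.weight = random_matrix(d_out, d_in, rng)        # frozen
        self.lora_a = random_matrix(rank, d_in, rng)         # trainable, r x d_in
        self.lora_b = [[0.0] * rank for _ in range(d_out)]   # trainable, zero init
        self.scale = alpha / rank

    def forward(self, x: Vector) -> Vector:
        base = matvec(self.weight, x)
        update = matvec(self.lora_b, matvec(self.lora_a, x))
        return [b + self.scale * u for b, u in zip(base, update)]

    def trainable_parameters(self) -> int:
        rank, d_in = len(self.lora_a), len(self.lora_a[0])
        return rank * d_in + len(self.lora_b) * rank


rng = random.Random(0)
ENCODER_DIM, LLM_DIM = 6, 8  # assumed toy sizes

# Stand-in for the output of a frozen image or point-cloud encoder.
encoder_feature = [rng.gauss(0.0, 1.0) for _ in range(ENCODER_DIM)]

# Projection layer: maps the encoder feature into the LLM feature space.
projection = random_matrix(LLM_DIM, ENCODER_DIM, rng)
llm_token = matvec(projection, encoder_feature)

# One LLM weight matrix wrapped with a rank-2 adapter.
layer = LoRALinear(LLM_DIM, LLM_DIM, rank=2, alpha=4.0, rng=rng)
output = layer.forward(llm_token)

print(len(llm_token), layer.trainable_parameters(), LLM_DIM * LLM_DIM)
```

With `B` initialized to zero, the adapted layer starts out identical to the frozen one, and training only touches the 32 adapter parameters instead of all 64 weights. The saving is modest at this toy size but grows quickly as the layer dimension increases relative to the rank.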

Project Links for CAD-MLLM

- Project Website: cad-mllm.github.io

- arXiv Technical Paper: https://arxiv.org/pdf/2411.04954

Application Scenarios of CAD-MLLM

- Industrial Design and Manufacturing: Designers and engineers can quickly generate and modify complex industrial product CAD models, accelerating product development processes.

- Architecture and Engineering: Architects and structural engineers can generate precise CAD drawings from site photos or terrain data, improving design and planning efficiency.

- Automotive Industry: Automotive manufacturers can generate precise CAD models of car parts from concept sketches or descriptions, optimizing design and manufacturing processes.

- Aerospace: Engineers can generate CAD models of aircraft and spacecraft parts and structures from complex design requirements and performance parameters.

- Education and Training: Students and novices can create CAD models from simple descriptions or sketches, which flattens the learning curve and improves teaching effectiveness.

Categories

CAD, AI, Multimodal, LLM
