OpenScholar

by University of Washington and Allen Institute for AI

OpenScholar is a retrieval-augmented language model designed to help scientists answer questions by retrieving and synthesizing relevant scientific literature.

What is OpenScholar?

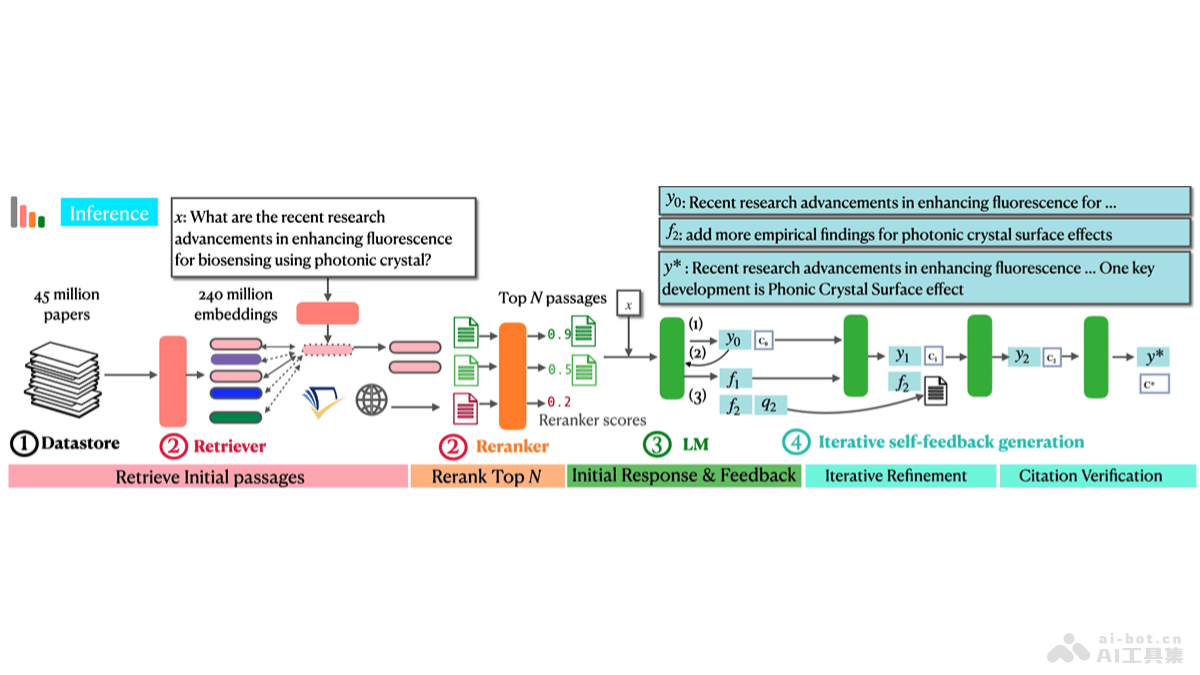

OpenScholar is a retrieval-augmented language model (LM) developed by the University of Washington and the Allen Institute for AI. It helps scientists answer questions by retrieving and synthesizing relevant scientific literature. The system combines a large-scale datastore of scientific papers, custom retrievers and re-rankers, and an optimized 8B-parameter language model to generate accurate, literature-grounded answers. OpenScholar surpasses existing proprietary and open-source models in factuality and citation accuracy: on ScholarQABench, OpenScholar-8B achieves 5% higher correctness than GPT-4o and 7% higher than PaperQA2. All related code and data are open source, supporting and accelerating scientific research.

Main Features of OpenScholar

- Literature Retrieval and Synthesis: Searches a large corpus of scientific literature and synthesizes the relevant passages to answer user queries.

- Citation-Based Answers: Generates answers with accurate citations, enhancing reliability and transparency.

- Cross-Disciplinary Application: Applicable across various scientific fields, including computer science, biomedicine, physics, and neuroscience.

- Improved Retrieval Efficiency: Utilizes specialized retrievers and re-rankers to enhance the efficiency and accuracy of retrieving relevant scientific literature.

- Self-Feedback Iteration: Iteratively improves answers using a self-feedback mechanism, enhancing answer quality and citation completeness.

Technical Principles of OpenScholar

- Data Storage (OpenScholar Datastore): Contains over 45 million scientific papers and 237 million corresponding paragraph embeddings, which form the foundation for retrieval.

- Specialized Retrievers and Re-rankers: Both are trained on the scientific literature datastore to identify and rank relevant passages.

- 8B Parameter Language Model: An 8B parameter large language model optimized for scientific literature synthesis tasks, balancing performance and computational efficiency.

- Self-Feedback Generation: Iteratively refines model outputs based on natural language feedback during inference, potentially involving additional literature retrieval to improve answer quality and fill citation gaps.

- Iterative Retrieval Augmentation: After generating an initial answer, the model produces feedback to guide further retrieval, iteratively improving the answer until all feedback is addressed.
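The retrieve, re-rank, and self-feedback steps listed above can be illustrated with a minimal Python sketch. This is not OpenScholar's implementation: the bag-of-words `embed` function stands in for a learned dense encoder, the term-coverage `rerank` stands in for a trained re-ranker, and `generate` and `critique` are hypothetical stubs in place of the 8B language model. All function names and parameters here are assumptions made for illustration.

```python
import math
from collections import Counter
from typing import Callable, List, Tuple


def embed(text: str) -> Counter:
    """Toy bag-of-words vector (assumption: stands in for a dense encoder)."""
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


def retrieve(query: str, passages: List[str], k: int) -> List[int]:
    """Return indices of the k passages most similar to the query."""
    q = embed(query)
    order = sorted(
        range(len(passages)),
        key=lambda i: cosine(q, embed(passages[i])),
        reverse=True,
    )
    return order[:k]


def rerank(query: str, passages: List[str], candidates: List[int], keep: int) -> List[int]:
    """Toy re-ranker: order candidates by the fraction of query terms they cover."""
    terms = set(query.lower().split())

    def coverage(i: int) -> float:
        return len(terms & set(passages[i].lower().split())) / (len(terms) or 1)

    return sorted(candidates, key=coverage, reverse=True)[:keep]


def answer_with_feedback(
    question: str,
    passages: List[str],
    generate: Callable[[str, List[str]], str],
    critique: Callable[[str, str], List[str]],
    k: int = 4,
    keep: int = 2,
    max_rounds: int = 3,
) -> Tuple[str, List[int]]:
    """Draft an answer, then retrieve more evidence until no feedback remains."""
    evidence = rerank(question, passages, retrieve(question, passages, k), keep)
    answer = generate(question, [passages[i] for i in evidence])
    for _ in range(max_rounds):
        # critique returns follow-up queries; an empty list means "no gaps left"
        follow_ups = critique(question, answer)
        if not follow_ups:
            break
        for query in follow_ups:
            for i in rerank(query, passages, retrieve(query, passages, k), keep):
                if i not in evidence:
                    evidence.append(i)
        answer = generate(question, [passages[i] for i in evidence])
    return answer, evidence


# Hypothetical mini-datastore and stub model calls, for demonstration only.
passages = [
    "dense retrievers embed passages into vectors",
    "re-rankers reorder retrieved passages by relevance",
    "self-feedback lets a model refine its answer",
    "bananas are rich in potassium",
]


def generate(question: str, context: List[str]) -> str:
    # Stub "LM": concatenates the evidence with numbered citations.
    return " ".join(f"{text} [{n}]" for n, text in enumerate(context, start=1))


def critique(question: str, answer: str) -> List[str]:
    # Stub feedback: request more retrieval if self-feedback is not covered yet.
    return [] if "self-feedback" in answer else ["self-feedback refine answer"]


answer, evidence = answer_with_feedback(
    "how do dense retrievers work", passages, generate, critique
)
print(answer)
print(evidence)
```

The loop stops when the critique step returns no follow-up queries or when `max_rounds` is reached, which mirrors the description of iterating "until all feedback is addressed" while keeping inference cost bounded. In a real system the stubs would be replaced by calls to a trained retriever, re-ranker, and language model.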

Project Links for OpenScholar

- Project Website: https://allenai.org/blog/openscholar

- GitHub Repository: https://github.com/AkariAsai/OpenScholar

- HuggingFace Model Library: https://huggingface.co/collections/OpenScholar/openscholar-v1-67376a89f6a80f448da411a6

- arXiv Technical Paper: https://arxiv.org/pdf/2411.14199

Application Scenarios of OpenScholar

- Research Assistance: Helps researchers quickly access the latest research findings, keeping them updated in their field.

- Literature Review: Assists authors in integrating and summarizing a large volume of literature when writing academic papers or reports, improving writing efficiency.

- Interdisciplinary Research: As OpenScholar covers multiple scientific fields, it helps researchers explore connections and intersections between different disciplines.

- Education and Learning: Aids students and teachers in learning and teaching by providing in-depth literature analysis and summaries.

- Technology Monitoring: Enables R&D departments in companies to monitor technological trends, especially in fast-evolving fields.

Features & Capabilities

What You Can Do

Literature Retrieval

Literature Synthesis

Citation-Based Answers

Cross-Disciplinary Research

Education and Learning

Categories

Academic Search

Language Model

Scientific Literature

Open Source

Research Assistance

Literature Review

Interdisciplinary Research

Education

Technology Monitoring

Getting Started

Pricing

Free
